How To Deploy a Frontend on Kubernetes?

Kubernetes (commonly referred to as "K8s") is an open source system for automating the deployment, scaling and management of containerized applications on server clusters. It works with a variety of container technologies, and is often used with Docker. It was originally designed by Google and then donated to the Cloud Native Computing Foundation.

This blog post belongs to a series that describes how to use Minikube, declarative configuration files and the kubectl command-line tool to deploy Docker micro-services on Kubernetes.

It focuses on the installation of an Angular 8 frontend application served by an NGinx Ingress controller.

Although it is the most complex kind of Kubernetes object management, declarative object configuration lets you apply and revert configuration updates. Also, configuration files can easily be shared or saved into a version control system like Git.

But before going to the configuration of our frontend and its proxy, let's see what is needed in order to follow this tutorial.

Prerequisites¶

Executing this blog post code and configuration samples on a local Linux machine requires:

A basic knowledge of K8s concepts is also recommended.

Install Kubernetes Command Line Client¶

First, we need to install kubectl. I used version 1.15:

curl -LO https://storage.googleapis.com/kubernetes-release/release/v1.15.0/bin/linux/amd64/kubectl

chmod +x ./kubectl

sudo mv ./kubectl /usr/local/bin/kubectl

The kubectl version command should display the 1.15 version.

Tip: If you think typing the kubectl command is too long, you can easily create a shortcut with a symlink. For instance, create this link with sudo ln -s /usr/local/bin/kubectl /usr/local/bin/kctl to type kctl instead of kubectl.

Install Minikube¶

You can deploy Kubernetes on your machine, but it's preferable to do it in a VM when developing. It's easier to restart from scratch if you make a mistake, or to try several configurations without being affected by leftover objects. Minikube is the tool to test Kubernetes or develop locally.

Download and install Minikube:

curl -Lo minikube https://storage.googleapis.com/minikube/releases/latest/minikube-linux-amd64 \
&& chmod +x minikube

sudo install minikube /usr/local/bin

KVM and Minikube driver¶

Minikube wraps Kubernetes in a Virtual Machine, so it needs a hypervisor: VirtualBox or KVM. Since I do my tests on a small portable computer, I prefer to use the lighter virtualization solution: KVM. Follow this guide to install it on Ubuntu.

Finally, install the KVM driver:

curl -LO https://storage.googleapis.com/minikube/releases/latest/docker-machine-driver-kvm2

chmod +x docker-machine-driver-kvm2

sudo mv docker-machine-driver-kvm2 /usr/local/bin/

Then, launch Minikube using the KVM2 driver:

> minikube start --vm-driver=kvm2

😄 minikube v1.2.0 on linux (amd64)

🔥 Creating kvm2 VM (CPUs=2, Memory=2048MB, Disk=20000MB) ...

🐳 Configuring environment for Kubernetes v1.15.0 on Docker 18.09.6

🚜 Pulling images ...

🚀 Launching Kubernetes ...

⌛ Verifying: apiserver proxy etcd scheduler controller dns

🏄 Done! kubectl is now configured to use "minikube"

K8S Definitions¶

Before continuing, it is best to define some concepts that are unique to Kubernetes. If you are familiar with this solution, you can go directly to the next chapter.

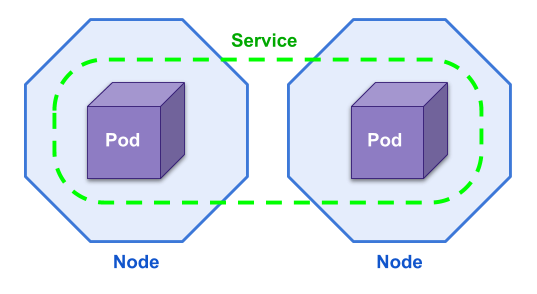

- A K8s cluster is divided into Nodes. A node is a worker machine and may be a VM or physical machine. In our case, the K8s cluster is composed of a single Node: the Minikube VM.

- When creating an application Deployment, K8s creates one or several Pods on the available nodes. A Pod is a group of one or more Docker containers, with shared storage/network, and a specification for how to run the containers.

- These Pods are grouped into Services.

A Service defines a policy by which to access its targeted pods.

For example:

- A Service with the type NodePort exposes the Service on each Node's IP at a static port. From outside the cluster, the Service is accessible by requesting <NodeIP>:<NodePort>.

- A LoadBalancer Service exposes the Service externally using the load-balancer of a cloud provider.

The NodePort solution would work for testing purposes but is not reliable in a production environment. And the LoadBalancer works only in the Cloud, not in a local test environment.

The solution that fits any use case is to install an Ingress Controller and use Ingress rules.

TL; DR¶

Download and extract the frontend.zip archive.

It contains several K8s configuration files for Ingress and the Angular frontend.

It also contains a Makefile. Here is an extract of this file:

start:

minikube start --vm-driver=kvm2 --extra-config=apiserver.service-node-port-range=1-30000

mount:

minikube mount ${PWD}/grafana/config:/grafana

all:

kubectl apply -R -f .

list:

minikube service list

watch:

kubectl get pods -A --watch

To launch the complete stack:

- Run make start to start Minikube (or copy and paste the command above in a terminal if you do not have make on your computer),

- Execute make all to launch the Ingress controller and the Frontend,

- Wait for the various Pods to start (it may take some time to download the Docker images) using make watch,

- List the available services with make list.

|---------------|----------------------|--------------------------------|

| NAMESPACE | NAME | URL |

|---------------|----------------------|--------------------------------|

| default | kubernetes | No node port |

| ingress-nginx | ingress-nginx | http://192.168.39.146:80 |

| | | http://192.168.39.146:443 |

| kube-system | kube-dns | No node port |

| kube-system | kubernetes-dashboard | No node port |

|---------------|----------------------|--------------------------------|

Open the URL of the ingress-nginx service with /administration appended: http://192.168.39.146:80/administration and check that the frontend is running and served by the NGINX proxy.

Install and Configure NGinx Ingress¶

The concept of Ingress is split in two parts:

- The Ingress Controller, which is a kind of wrapper around an HTTP proxy,

- Ingress resources/rules that expose HTTP and HTTPS routes from outside the cluster to services within the cluster, depending on traffic rules.

Motivation¶

We already use HAProxy to redirect the traffic to a specific Docker container. It's the same principle here, except that you don't configure the proxy directly but specify Ingress configuration objects. The proxy configuration is automatically updated for you by the controller.

There is one issue with Ingress though: the configuration is done using annotations that are specific to the underlying controller. So, unfortunately, you cannot change the controller implementation without updating the Ingress resources.

The simplest Ingress controller is the one maintained by Kubernetes: Kubernetes NGinx, not to be confused with the one maintained by the NGINX team.

Note: I also tried to use HAProxy's Ingress Controller without success. I could not configure the URL Rewrite on this one.

Using a proxy and an Ingress controller allows us to serve multiple applications on the same hostname and port (80/443) but with different paths.

Ingress Controller Installation¶

Let's get our hands dirty and install an NGINX ingress controller.

We use the kubectl apply command.

It creates all resources defined in the given file.

This file can be on the local file system or accessed using a URL.

Note: Skip directly to the next chapter if you want to expose your Ingress Controller on port 80.

So, the first command to execute automatically installs all components required on the K8s cluster:

> kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/static/mandatory.yaml

namespace/ingress-nginx created

configmap/nginx-configuration created

configmap/tcp-services created

configmap/udp-services created

serviceaccount/nginx-ingress-serviceaccount created

clusterrole.rbac.authorization.k8s.io/nginx-ingress-clusterrole created

role.rbac.authorization.k8s.io/nginx-ingress-role created

rolebinding.rbac.authorization.k8s.io/nginx-ingress-role-nisa-binding created

clusterrolebinding.rbac.authorization.k8s.io/nginx-ingress-clusterrole-nisa-binding created

deployment.apps/nginx-ingress-controller created

You can check what is being installed in detail by downloading the configuration file.

All the related resources are deployed in a dedicated namespace called ingress-nginx.

Check that the NGinx pod is started with the following command (press CTRL + C when the container status is Running):

> kubectl get pods -n ingress-nginx --watch

NAME READY STATUS RESTARTS AGE

nginx-ingress-controller-7995bd9c47-k2wps 1/1 Running 0 2m6s

Our Ingress Controller is started, but not yet accessible from outside our K8s cluster. We need to create a NodePort Service to expose it to the outside world.

Run the following command to apply the configuration provided by the ingress-nginx project to create a NodePort service:

> kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/static/provider/baremetal/service-nodeport.yaml

service/ingress-nginx created

List all services in the ingress-nginx namespace:

> kubectl get services -n ingress-nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx NodePort 10.98.52.92 <none> 80:32112/TCP,443:30923/TCP 74s

Here we see that port 80 is dynamically mapped to node port 32112 (you will most probably get a different mapping).
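If you want to reuse the mapped port in a script, the PORT(S) value can be parsed. The following is a minimal sketch, assuming the 80:32112/TCP,443:30923/TCP format shown above. The node_port_for function is a hypothetical helper, not part of kubectl.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract the node port mapped to a given service port
# from a PORT(S) value as printed by `kubectl get services`.
node_port_for() {
  local ports="$1" service_port="$2" entry mapping
  local IFS=','
  for entry in $ports; do
    mapping="${entry%%/*}"            # drop the "/TCP" protocol suffix
    case "$mapping" in
      "$service_port":*) echo "${mapping#*:}"; return 0 ;;
    esac
  done
  return 1                            # no node port mapped for this service port
}

node_port_for "80:32112/TCP,443:30923/TCP" 80   # prints "32112"

# Against a running cluster, kubectl's jsonpath output should give the same
# value without parsing (not executed here):
#   kubectl get service ingress-nginx -n ingress-nginx \
#     -o jsonpath='{.spec.ports[?(@.name=="http")].nodePort}'
```

The function returns a non-zero status when the Service port has no node port, which is the case for a plain 80/TCP entry.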

Listing all exposed services using Minikube confirms that the Ingress Controller is available:

> minikube service list

|---------------|----------------------|--------------------------------|

| NAMESPACE | NAME | URL |

|---------------|----------------------|--------------------------------|

| default | kubernetes | No node port |

| ingress-nginx | ingress-nginx | http://192.168.39.12:32112 |

| | | http://192.168.39.12:30923 |

| kube-system | kube-dns | No node port |

| kube-system | kubernetes-dashboard | No node port |

|---------------|----------------------|--------------------------------|

192.168.39.12 is the IP address allocated to our Minikube VM.

You can also get it with the minikube ip command.

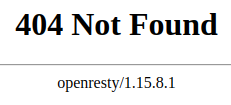

Open the URL http://192.168.39.12:32112 in a web browser, you will see a NGINX 404 page:

Our NGINX controller is responding!

Expose NodePort 80¶

By default, Kubernetes is configured to expose NodePort services on the port range 30000 - 32767. But this port range can be configured, allowing us to use the port 80 for our Ingress Controller.

Be warned though that this is discouraged.
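To make the effect of the port range concrete, here is a small sketch that checks a few ports against the default range quoted above. The port_in_range function is a hypothetical helper written for this illustration. It only mirrors the range check and does not talk to the API server.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds when <port> lies within [<min>, <max>].
port_in_range() {
  local port="$1" min="$2" max="$3"
  [ "$port" -ge "$min" ] && [ "$port" -le "$max" ]
}

# Check the ports used in this post against the default NodePort range.
for port in 80 443 32112; do
  if port_in_range "$port" 30000 32767; then
    echo "$port: inside the default range 30000-32767"
  else
    echo "$port: outside the default range 30000-32767"
  fi
done
```

With the range changed to 1-30000, the same check accepts 80 and 443, which is what the rest of this chapter relies on.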

Start by deleting our existing minikube VM with the command:

> minikube delete

🔥Deleting "minikube" from kvm2 ...

The "minikube" cluster has been deleted.

Then restart it with the option apiserver.service-node-port-range=1-30000:

> minikube start --vm-driver=kvm2 --extra-config=apiserver.service-node-port-range=1-30000

😄 minikube v1.2.0 on linux (amd64)

💡 Tip: Use 'minikube start -p <name>' to create a new cluster, or 'minikube delete' to delete this one.

🏃 Re-using the currently running kvm2 VM for "minikube" ...

⌛ Waiting for SSH access ...

🐳 Configuring environment for Kubernetes v1.15.0 on Docker 18.09.6

▪ apiserver.service-node-port-range=1-30000

🔄 Relaunching Kubernetes v1.15.0 using kubeadm ...

⌛ Verifying: apiserver proxy etcd scheduler controller dns

🏄 Done! kubectl is now configured to use "minikube"

Start the Ingress Controller and wait for it to start (kubectl get pods -n ingress-nginx --watch):

kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/static/mandatory.yaml

Now that we can allocate the port 80, we also need to configure the NodePort service and expose the Ingress controller on this port. First download the configuration file:

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/static/provider/baremetal/service-nodeport.yaml

Then edit service-nodeport.yaml to add nodePort: 80 for the http entry and nodePort: 443 for the https one:

apiVersion: v1

kind: Service

metadata:

name: ingress-nginx

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

spec:

type: NodePort

ports:

- name: http

port: 80

targetPort: 80

protocol: TCP

nodePort: 80

- name: https

port: 443

targetPort: 443

protocol: TCP

nodePort: 443

selector:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

Apply the updated configuration:

> kubectl apply -f service-nodeport.yaml

service/ingress-nginx created

Our Ingress Controller is now available on port 80 for HTTP and 443 for HTTPS:

> minikube service list

|---------------|----------------------|--------------------------------|

| NAMESPACE | NAME | URL |

|---------------|----------------------|--------------------------------|

| default | kubernetes | No node port |

| ingress-nginx | ingress-nginx | http://192.168.39.146:80 |

| | | http://192.168.39.146:443 |

| kube-system | kube-dns | No node port |

| kube-system | kubernetes-dashboard | No node port |

|---------------|----------------------|--------------------------------|

You may think "Why don't we expose our frontend application directly using a NodePort Service?". That could also be done and it's probably the simplest solution ... for testing purposes. But it's not manageable in a production environment with several frontend applications and backends running.

Troubleshooting¶

Remember that you can list Pods with the command kubectl get pods -n ingress-nginx --watch.

- The -n parameter specifies the namespace, here ingress-nginx which is used by the NGinx Ingress controller,

- The --watch parameter refreshes the Pods list every time a modification occurs,

- Use the parameter -A to list resources for all namespaces.

In case your Pod is stuck with the status ContainerCreating, you can use the describe command to display a list of events that may tell you what is going on:

> kubectl describe pod nginx-ingress-controller-7995bd9c47-cnjl8 -n ingress-nginx

Name: nginx-ingress-controller-7995bd9c47-cnjl8

Namespace: ingress-nginx

Priority: 0

Node: minikube/192.168.122.244

Start Time: Sun, 11 Aug 2019 17:05:05 +0200

Labels: app.kubernetes.io/name=ingress-nginx

app.kubernetes.io/part-of=ingress-nginx

pod-template-hash=7995bd9c47

Annotations: prometheus.io/port: 10254

prometheus.io/scrape: true

Status: Pending

IP:

Controlled By: ReplicaSet/nginx-ingress-controller-7995bd9c47

[...]

Tolerations: node.kubernetes.io/not-ready:NoExecute for 300s

node.kubernetes.io/unreachable:NoExecute for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 12m default-scheduler Successfully assigned ingress-nginx/nginx-ingress-controller-7995bd9c47-cnjl8 to minikube

Normal Pulling 12m kubelet, minikube Pulling image "quay.io/kubernetes-ingress-controller/nginx-ingress-controller:0.25.0"
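When several Pods are starting, it can help to list only the ones that are not Running yet before describing them. This is a rough sketch, assuming the default column order of kubectl get pods -n <namespace> (NAME READY STATUS RESTARTS AGE). The pods_not_running function is a hypothetical helper, and the sample input is made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get pods -n <namespace>` output on stdin
# and print "<name> <status>" for every Pod whose STATUS is not Running.
# Assumes the default columns: NAME READY STATUS RESTARTS AGE.
pods_not_running() {
  awk 'NR > 1 && $3 != "Running" { print $1, $3 }'
}

# Canned sample input (illustrative only, not real cluster output).
pods_not_running <<'EOF'
NAME                                        READY   STATUS              RESTARTS   AGE
nginx-ingress-controller-7995bd9c47-cnjl8   0/1     ContainerCreating   0          12m
EOF

# Against a running cluster (not executed here):
#   kubectl get pods -n ingress-nginx | pods_not_running
```

Note that the -A flag adds a NAMESPACE column in front, so the column index would need to be adjusted in that case.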

Once a Pod is started, you can display the container logs with the command:

> kubectl logs nginx-ingress-controller-7995bd9c47-cnjl8 -n ingress-nginx

-------------------------------------------------------------------------------

NGINX Ingress controller

Release: 0.25.0

Build: git-1387f7b7e

Repository: https://github.com/kubernetes/ingress-nginx

-------------------------------------------------------------------------------

W0811 15:18:51.620335 6 flags.go:221] SSL certificate chain completion is disabled (--enable-ssl-chain-completion=false)

nginx version: openresty/1.15.8.1

W0811 15:18:51.624717 6 client_config.go:541] Neither --kubeconfig nor --master was specified. Using the inClusterConfig. This might not work.

I0811 15:18:51.624923 6 main.go:183] Creating API client for https://10.96.0.1:443

I0811 15:18:51.632485 6 main.go:227] Running in Kubernetes cluster version v1.15 (v1.15.0) - git (clean) commit e8462b5b5dc2584fdcd18e6bcfe9f1e4d970a529 - platform linux/amd64

I0811 15:18:51.719690 6 main.go:102] Created fake certificate with PemFileName: /etc/ingress-controller/ssl/default-fake-certificate.pem

E0811 15:18:51.720426 6 main.go:131] v1.15.0

Deploy an Angular 8 Frontend¶

Angular 8 Frontend Docker Image¶

Check out this blog post to know more about the creation of a Docker image for an Angular app: Packaging Angular apps as docker images.

We will need to configure a URL rewrite rule in our Ingress object. Check this chapter to know more about this: How to serve multiple Angular app with HAProxy.

How to Create a Deployment?¶

Start by creating the following configuration file, named frontend-deployment.yaml:

apiVersion: apps/v1

kind: Deployment

metadata:

name: frontend-deployment

spec:

selector:

matchLabels:

app: frontend

minReadySeconds: 5

template:

metadata:

labels:

app: frontend

spec:

containers:

- image: octoperf/administration-ui:1.2.1

name: frontend

ports:

- containerPort: 80

A Kubernetes Deployment is responsible for starting Pods on available cluster Nodes. Since our Pods contain a Docker container, the file above specifies the image, name and port mapping to use.

Apply the created configuration with the command:

> kubectl apply -f frontend-deployment.yaml

deployment.apps/frontend-deployment created

Finally, check that the deployment has started one pod:

> kubectl get deployments

NAME READY UP-TO-DATE AVAILABLE AGE

frontend-deployment 1/1 1 0 7s

The READY column shows 1/1: the number of Pods that are ready over the total number that must be started.

How to Expose a Deployment with a Service?¶

As with the Deployment, create a configuration file and apply it.

The configuration file is named frontend-service.yaml:

apiVersion: v1

kind: Service

metadata:

name: frontend-service

spec:

selector:

app: frontend

ports:

- protocol: TCP

port: 80

targetPort: 80

Apply it:

> kubectl apply -f frontend-service.yaml

service/frontend-service created

There is no type and no nodePort defined in this Service, so it defaults to the ClusterIP type, as the listing below shows. We only use it to group a logical set of Pods and make them accessible from inside the K8s cluster.

> kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

frontend-service ClusterIP 10.103.204.81 <none> 80/TCP 9s

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 44m

Here we can see that the PORT(S) column displays only 80/TCP for the frontend-service, not the usual 80:30001/TCP notation for an exposed port.
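Inside the cluster, a ClusterIP Service like this one is normally reached through its DNS name. The sketch below builds that name, assuming the conventional <service>.<namespace>.svc.<cluster-domain> scheme and the common default domain cluster.local (your cluster may use another one). The service_dns_name function is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the in-cluster DNS name of a Service.
# Assumption: the cluster domain is "cluster.local" unless given as $3.
service_dns_name() {
  local service="$1" namespace="${2:-default}" domain="${3:-cluster.local}"
  printf '%s.%s.svc.%s\n' "$service" "$namespace" "$domain"
}

service_dns_name frontend-service   # prints "frontend-service.default.svc.cluster.local"
```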

How to Create an Ingress Object to Publicly Expose an App?¶

If you have not done so already, install an Ingress Controller first.

Then create the following configuration file named frontend-ingress.yaml:

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

annotations:

kubernetes.io/ingress.class: "nginx"

nginx.ingress.kubernetes.io/rewrite-target: /$2

nginx.ingress.kubernetes.io/proxy-read-timeout: "12h"

nginx.ingress.kubernetes.io/ssl-redirect: "false"

name: frontend-ingress

namespace: default

spec:

rules:

- http:

paths:

- backend:

serviceName: frontend-service

servicePort: 80

path: /administration(/|$)(.*)

Ingress resources configuration is done using annotations:

- ingress.class should always be "nginx" unless you have multiple Ingress Controllers running,

- rewrite-target is used to skip the /administration part of the URL when forwarding requests to the frontend Docker container (URL Rewrite documentation),

- proxy-read-timeout sets a timeout for SSE connections,

- ssl-redirect is used to deactivate HTTPS redirection since we are not specifying a host: the default server is called without any host, and this server is configured with a self-signed certificate (which would display a big security warning in your browser).
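To see what the path regular expression and the rewrite-target annotation do together, the rule can be imitated locally with sed. This is only an approximation for illustration: the real rewrite is done by NGINX inside the controller. The rewrite_path function is a hypothetical helper and /assets/app.js is a made-up request path.

```shell
#!/usr/bin/env bash
# Hypothetical helper: imitate the Ingress rule
#   path:           /administration(/|$)(.*)
#   rewrite-target: /$2
# on a request path, using an extended regular expression in sed.
rewrite_path() {
  printf '%s\n' "$1" | sed -E 's#^/administration(/|$)(.*)#/\2#'
}

rewrite_path /administration                 # prints "/"
rewrite_path /administration/assets/app.js   # prints "/assets/app.js"
```

The second capture group holds everything after the /administration prefix, so the frontend container receives the paths it would see if it were served from the root.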

Apply the configuration:

> kubectl apply -f frontend-ingress.yaml

ingress.extensions/frontend-ingress created

And check that it is OK:

> kubectl get ingresses

NAME HOSTS ADDRESS PORTS AGE

frontend-ingress * 80 29s

Testing the Installation¶

If you configured your Ingress Controller to be exposed on port 80, the minikube service list command displays a similar result:

> minikube service list

|---------------|----------------------|--------------------------------|

| NAMESPACE | NAME | URL |

|---------------|----------------------|--------------------------------|

| default | frontend-service | No node port |

| default | kubernetes | No node port |

| ingress-nginx | ingress-nginx | http://192.168.39.162:80 |

| | | http://192.168.39.162:443 |

| kube-system | kube-dns | No node port |

| kube-system | kubernetes-dashboard | No node port |

|---------------|----------------------|--------------------------------|

Otherwise, you can display the port mapping with the following command:

> kubectl get services -n ingress-nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx NodePort 10.101.112.201 <none> 80:80/TCP,443:443/TCP 32m

Opening the URL http://192.168.39.162:80/administration (the IP address is probably different for you) in your Web browser displays the deployed application.
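The same check can be scripted from a terminal. Below is a minimal sketch, assuming curl is installed. Both frontend_url and http_status are hypothetical helpers written for this post, and the HTTP request itself is left as a comment because it needs a running cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the frontend URL from the Minikube IP.
frontend_url() {
  local ip="$1" port="${2:-80}" path="${3:-/administration}"
  printf 'http://%s:%s%s\n' "$ip" "$port" "$path"
}

# Hypothetical helper: print the HTTP status code returned for a URL.
http_status() {
  curl -s -o /dev/null -w '%{http_code}' "$1"
}

frontend_url 192.168.39.162   # prints "http://192.168.39.162:80/administration"

# Against a running cluster (not executed here):
#   http_status "$(frontend_url "$(minikube ip)")"
```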

Conclusion¶

You may also be interested in my other blog post about K8s, How to deploy InfluxDB/Telegraf/Grafana on Kubernetes?, or in other blog posts related to DevOps.