Tracing Slow Performance

You have built your performance tests and executed them under load, but the response times do not meet your requirements.

Or you are unable to execute your tests with the number of concurrent users required.

In this post we will give some insights into where you might want to start looking for the root cause of your performance issues.

These insights are very high-level. The architecture of your application, the language it is written in and the database technology it uses will determine which ones are of use and which are not.

Things to consider¶

We will work our way through a number of things to consider if you see performance issues while performance testing your application.

As mentioned already these are insights and areas to consider and need to be investigated more closely while troubleshooting your application for performance related bottlenecks.

CPU¶

Let’s start with an obvious one: the amount of CPU allocated to each element of your technology stack. It is straightforward to monitor the CPU of an application, whether your organisation uses one of the many licensed instrumentation tools that exist or you need to use the tools that are supplied with the operating system you are testing against.

perfmon on Windows¶

On Windows, for example, you can use Perfmon: from a command prompt, enter perfmon and press Return.

Perfmon will start, assuming you have the correct permissions.

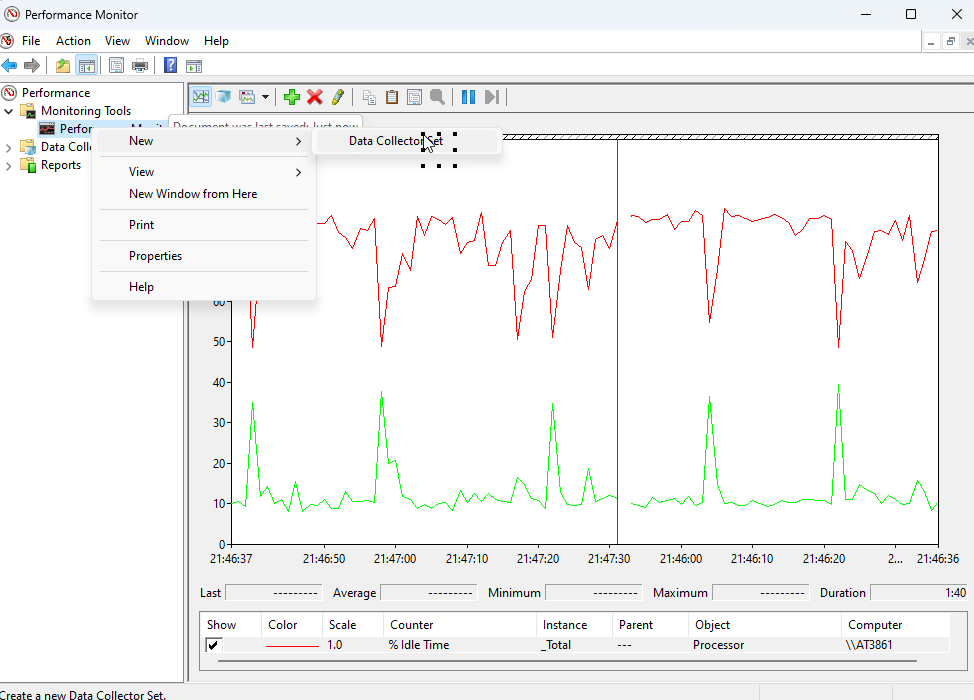

If you select the Performance Monitor sidebar option, you will be presented with a Graph View which will normally have one counter selected.

If you select the Green cross at the top of the window an Add Counters dialog appears.

In the top scrollable list, you get a list of counters to add. If you find Processor and click on the expand arrow, you can see a set of counters to add.

From here you select the ones that you want; the bottom scrollable list allows you to fine-tune your selection.

We will add:

- % Processor Time

- % Idle Time

You can see that the graph gets populated in real time with the counters you have selected.

This is not ideal when it comes to your performance test. Firstly, the graph cycles, so the history is lost. Secondly, you would have to watch the graph while your tests are running, and you are likely to have multiple servers under load during your testing.

You can however write these metrics to a file which you can then graph after the test has completed.

Right-click on Performance Monitor in the sidebar and select New -> Data Collector Set.

Follow the set-up steps giving the data set a name and location and select Finish.

For the purposes of this post I have called the data collector set ‘OctoPerf_Test’.

If you expand the Data Collector Sets folder and expand the User Defined folder you will find your Data Collector Set.

Right-click on your collector and select Start. The metrics are now being written to the folder you defined when setting this up.

Right-click and select Stop once your test is completed. You can then graph and analyse the data.

Alternative tools¶

If you are using Linux or one of its variants, then there are a few alternative tools at your disposal to measure CPU.

Examples of some of these are:

- top

- mpstat

- sar

- iostat

Examples of their basic usage can be found here.

top on Linux¶

For the purposes of this post, we will focus on top; a good guide can be found here.

From a command prompt enter top.

If you press 1 on your keyboard you can see the CPU metrics for each Core on your application server.

We can now see the CPU consumption but as we stated in the Perfmon section above this is not particularly useful if you are running a performance test and want to capture the metrics across the whole test.

To get around this we can write our results to a file using this command:

top -d 5 -n 2 -b > top.out

- The -d 5 option tells the top command to refresh every 5 seconds; you can change this to whatever you want.

- The -n 2 option tells top how many snapshots of the metrics you want to capture.

- The -b option runs top in batch mode so that we can then redirect the output to a file, which we have called top.out.

All you need to do to determine the values for the -d and -n options is to decide on a suitable interval for -d, which may be every minute or every second; this probably depends on the duration of your test.

If you have an hour-long test then once a minute is probably OK. If you have a Soak Test that may run for 24 hours then every 10 minutes may be OK. Ultimately it’s up to you, as you will be analysing the data.

Once you have settled on a value for -d then -n is calculated by dividing the duration of your test by the value for -d.

For example, an hour-long test sampled once a minute is:

3600 seconds / 60 seconds (this is our -d value) = 60 (this is our -n value)
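The same arithmetic can be wrapped in a small shell helper so you do not have to work it out by hand for each test. This is only a sketch: the function name samples_for is our own invention and is not part of top, and it assumes both values are whole numbers of seconds.

```shell
#!/bin/sh
# Hypothetical helper (not part of top): work out the -n value
# from the test duration and the chosen -d interval, both in seconds.
samples_for() {
    duration=$1
    interval=$2
    # Round up so that a final partial interval is still captured.
    echo $(( (duration + interval - 1) / interval ))
}

# Example usage for an hour-long test sampled once a minute:
#   top -d 60 -n "$(samples_for 3600 60)" -b > top.out
```

For a 24-hour Soak Test sampled every 10 minutes, samples_for 86400 600 gives 144.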

If we run our original command:

top -d 5 -n 2 -b > top.out

We will see a file called top.out on our filesystem which contains the data that you can then use to graph your CPU usage once the performance tests complete.
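To graph the data you first need to pull the CPU figures out of top.out. The sketch below is one possible way to do this with awk. It assumes the summary line format printed by recent procps-ng versions of top (a line starting with %Cpu(s): that contains an id value); older or non-Linux versions of top format this line differently, so check your own output first. The function name cpu_busy_from_top is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print "time,busy%" for every snapshot in a top
# batch file. Assumes procps-ng style lines such as:
#   top - 10:15:01 up 3 days, ...
#   %Cpu(s):  5.0 us,  2.5 sy,  0.0 ni, 92.5 id, ...
cpu_busy_from_top() {
    awk '
        # Remember the timestamp of the current snapshot.
        /^top - / { ts = $3 }
        /^%Cpu\(s\):/ {
            # Commas are not always followed by a space, so replace
            # them before looking at the individual fields.
            gsub(/,/, " ")
            for (i = 2; i <= NF; i++) {
                if ($i == "id") idle = $(i - 1)
            }
            # Busy CPU is whatever is not idle.
            printf "%s,%.1f\n", ts, 100 - idle
        }
    ' "$1"
}

# Example usage:
#   cpu_busy_from_top top.out > cpu.csv
```

The resulting CSV file can be opened in any spreadsheet or graphing tool.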

OctoPerf also provides its own monitoring layer.

If under load you are consuming a significant amount of CPU then this is going to affect performance of your application.

If you are testing on a cloud-based platform then increasing CPU is normally very easy and can be done almost instantaneously, whereas physical hardware will be more of a challenge.

Memory¶

Memory is also one of the more obvious places to look for the root cause of performance issues. Memory is easy to monitor for the same reasons as we discussed in CPU.

Perfmon on Windows and top on Linux can again be used to monitor the memory usage.

The top command will be identical to the one we just used for CPU as both pieces of information are available from this command.

There are however other ways to specifically monitor memory consumption on Linux with an example being /proc/meminfo.

See here for more information on this.
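As a rough illustration, /proc/meminfo can be sampled from a shell script. The sketch below reads the MemAvailable value, which is reported in kB on reasonably recent Linux kernels, and converts it to MiB. The function name mem_available_mib and the output file name mem.out are our own choices.

```shell
#!/bin/sh
# Hypothetical helper: print available memory in MiB.
# Reads /proc/meminfo by default; another file can be passed for testing.
mem_available_mib() {
    awk '/^MemAvailable:/ { printf "%d\n", $2 / 1024 }' "${1:-/proc/meminfo}"
}

# Example usage: record one "time,MiB" sample a minute during a test.
#   while true; do
#       echo "$(date +%T),$(mem_available_mib)"
#       sleep 60
#   done > mem.out
```

If the available figure trends steadily downwards across the test, that is worth investigating.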

For Windows you will need to select the specific Perfmon counters the same way that we did for CPU.

In the top scrollable list, you get a list of counters to add. If you find Memory and click on the expand arrow, you can see a set of counters to add.

From here you select the ones that you want; the bottom scrollable list allows you to fine-tune your selection.

We will add:

- Available Bytes

- % Committed Bytes In Use

Our graph is being populated.

Most applications will reside in Memory once started, and if the application code handles the destruction of objects it no longer needs, you would not expect Memory consumption to grow during a load test.

If, however, you see an upward trend in consumption, this will lead to performance issues as you start to reach your Memory limits.

Cloud-based platforms will again allow you to increase Memory easily, while physical hardware is again more of a challenge.

Garbage Collection¶

This is linked to Memory and is described in Wikipedia as:

In computer science, garbage collection (GC) is a form of automatic memory management. The garbage collector attempts to reclaim memory, which was allocated by the program, but is no longer referenced; such memory is called garbage. The Garbage Collection process cleans unreferenced objects from Memory and is normally set to run when memory consumption reaches a threshold which is normally when a certain percentage of memory is consumed.

You may be wondering why Garbage Collection may result in your application under test performing badly. The answer is that Garbage Collection consumes application resources to run, so excessive Garbage Collection activity will have an impact on performance.

Therefore, if you see excessive Garbage Collection and it is impacting performance then the application code needs to be reviewed to see where better object lifetime management can be introduced.

You can monitor Garbage Collection of your Java applications in a number of ways; there is a good source located here.

For the purposes of this post, we will look at jstat.

jstat¶

The first thing we need to do is to find the Process ID (PID) of the Java application that we want to monitor.

To find the PID of your application under test, run this from the application server's command line.

ps -ef | grep java

This will output information on all the Java applications currently running.

The PID is in the second column.

This is an example from a test machine.

We will use the PID 22779 as this is our test application.

To view the application Garbage Collection, use this command

jstat -gc -t 22779 10000 30

- -gc: garbage collection related statistics will be printed

- -t: timestamp since JVM was started will be printed

- 22779: target JVM process Id

- 10000: statistics will be printed every 10,000 milliseconds (i.e. 10 seconds).

- 30: statistics will be printed for 30 iterations.

As we did for top earlier in this post we can calculate a sensible interval and number of iterations based on the duration of the performance test.

The above options will cause jstat to print metrics for 300 seconds (10 seconds x 30 iterations).

Here is an example of the output.

To redirect the output to a file, which will be a much better option during your testing, you can simply issue this command.

jstat -gc -t 22779 10000 30 > gc.out

Where the output will be written to a file called gc.out.
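If you want to graph the results, one option is to reduce gc.out to a two-column CSV file. The sketch below looks the columns up by the names in the header row, assuming your jstat version labels them Timestamp and GCT (total garbage collection time in seconds); check the header of your own gc.out first, as the columns vary between Java versions. The function name gc_time_csv is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: turn jstat -gc -t output into "Timestamp,GCT" CSV.
# Assumes the first line is a header that contains both column names.
gc_time_csv() {
    awk '
        NR == 1 {
            # Map each column name to its position.
            for (i = 1; i <= NF; i++) col[$i] = i
            print "Timestamp,GCT"
            next
        }
        { print $(col["Timestamp"]) "," $(col["GCT"]) }
    ' "$1"
}

# Example usage:
#   gc_time_csv gc.out > gc.csv
```

A GCT value that climbs quickly relative to the timestamp suggests the application is spending a lot of its time collecting garbage.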

The process of determining whether Garbage Collection is being effective and whether it requires tuning is outside the scope of this post, but there is a guide to understanding the output here.

Horizontal Scaling vs Vertical Scaling¶

Each application is unique and will not necessarily react to increases in Memory or CPU in the same way.

We have spoken above about increasing CPU and/or Memory in our applications to resolve performance issues.

- Some applications do not react well to Vertical scaling, which means adding more CPU or Memory to an application to solve performance issues.

- Some applications require Horizontal scaling, which means adding more load balanced instances of an application with the same CPU and Memory footprint.

So, this is not really a thing to consider if you find performance issues but more of something to consider if adding more resources to your hardware does not seem to make any difference.

Load Balancer¶

If your application sits behind a Load Balancer, then you need to make sure that this part of the technology stack is not the cause of your performance issues. A Load Balancer may again suffer from CPU and Memory issues, and these should be checked.

It's possible that your application uses sticky sessions, where each request from a user is routed to a particular server based on the user's IP address.

If you are performance testing from a load injector it is possible that all your requests from JMeter are being sent from the same IP address and therefore all being directed to a single application server and not balanced across your whole estate.

To ensure you inject load from multiple IP addresses in JMeter, make sure you have set up your network adapter on the load injector correctly, see here.

And you need to configure your requests to use these IP addresses in JMeter by using a variable in the Source address field of your request.

On the Load Balancer it is always worth checking the distribution policies and confirming that, during your tests, there was an even distribution across all the servers being load balanced, as it may be that the policy that has been set up is incorrect.
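One simple way to check the distribution after a test is to count how many requests each server handled. The sketch below assumes you have already extracted one server name per request into a text file, for example from your Load Balancer or application logs; how you do that depends on your own log format. The function name requests_per_server and the file name servers.txt are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: count requests per server.
# Input: a file with one server name per line (one line per request).
# Output: "server count" lines, busiest server first.
requests_per_server() {
    awk '{ count[$1]++ } END { for (s in count) print s, count[s] }' "$1" |
        sort -k2,2nr -k1,1
}

# Example usage:
#   requests_per_server servers.txt
```

If one server has handled nearly all of the requests, sticky sessions or an incorrect distribution policy are likely candidates.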

3rd Party application¶

If your application relies on services that are hosted outside of your estate then this may be the cause of your performance issue, and it may be one that will not happen in production.

It is more than likely that a 3rd party will have a test environment that is not scaled like production to support your testing. So while you are running performance tests against correctly sized hardware, the 3rd party requests may be the cause of the bottleneck.

You cannot be expected to be responsible for performance of 3rd party software and will have to assume that they can support your predicted production volumes and respond within your requirements. This will have to be part of the agreement with the 3rd party.

The best way to resolve this is to stub external services to ensure that they respond in line with your requirements.

Network latency¶

Your company network is finite in terms of its bandwidth and it's more than likely that there is some priority or bandwidth reservation given to production traffic over the non-production traffic.

When you run a load test, heavy utilisation of the network through development commits, other testing or general usage may be the cause of poor performance.

This might not account for all performance related issues, but it may contribute to some.

It is good practice to run your tests overnight, away from batch windows, to ensure that the network is consistent.

If you are concerned that network latency is the cause of your performance issues then there are many tools that can provide you with this information.

An example on the Windows platform is tracert.

There is a good source of information on this here.

tracert on Windows¶

If we open a command prompt and enter tracert www.octoperf.com we can see information about the route taken to reach the address.

You can use this on your internal networks to trace your network requests to your application servers and determine if latency may be an issue.

Database Queries¶

Poorly performing database queries are a very common cause of poor application performance, and these can often be resolved quickly with just the addition of an index to a table.

Sometimes the fix is more complex: a slow query may highlight an inefficient database schema design that requires a change.

All database technologies come with the ability to determine if SQL is efficient and performing well or not and many will make recommendations on where improvements can be found.

There are too many flavours of database technology to consider all of them in this post, but working with your DBA to look at the logging during your tests should be the first step.

It is worth noting that database queries that are not correctly indexed or designed take longer as your data volumes grow. If you are only using small amounts of data in your database tables you may not uncover performance issues until you reach production and the data volumes grow.

It is always worth remembering that you should try to test with production indicative data volumes where possible. If you are unable to seed this data, make sure you do not truncate it between performance test execution cycles, so it starts to grow naturally.

Database Pool Size¶

Another database related consideration if you are seeing slow performance and you are struggling to pinpoint the root cause is the database pool size.

Databases allow concurrent requests by managing available connections in a pool, and these pools are often left at their default size.

If you have a high level of concurrency in your performance tests, then it may be that a request is waiting for a connection to be made available before executing a query.

On the surface your database queries might seem quick, but you need to be able to monitor the pool size of the database as your tests run.

Each database technology will provide a way of monitoring this pool for active and inactive connections. If you see there are no inactive connections during your tests then you can easily increase this pool and re-test.

Message queues¶

Many applications use message queues for the publishing and subscribing to messages being distributed between application components.

Message queues are processed sequentially, and therefore if messages arrive faster than they are consumed you will get a bottleneck.

Message queue depth can be monitored in real time using the interface provided by the queue technology you are using. You should use this during your performance tests to monitor the ingress and egress of messages, to see if they match and that the queue depth does not significantly grow.

The monitoring of these queues is a bit of a manual process, as integrating with the queues is a security risk and doing this from outside trusted applications is not always easy. Most message queue technologies have some form of interface, so if you believe that the message queues may be causing your performance issues, you may need to check the depth of these queues manually during your performance testing.

This is an example of the Red Hat Active Message Queue interface.

If we select the message queue we are interested in and select the Operations tab, we can see a countMessages() operation that will give us the number of messages in the queue.

If you were to run this periodically during your test and monitor the size you would be able to tell if the queue was growing or not.
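If you note the message count down each time you check it, a few lines of shell can tell you which way the queue is heading. This is a minimal sketch that assumes you have recorded the depth samples by hand, one number per line and in time order, in a text file; it simply compares the first and last sample. The function name queue_trend and the file name depths.txt are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: report whether a queue grew over a test.
# Input: a file of queue depth samples, one number per line, oldest first.
queue_trend() {
    awk '
        NR == 1 { first = $1 }
        { last = $1 }
        END {
            if (NR < 2) { print "not enough samples"; exit }
            delta = last - first
            if (delta > 0) print "growing by " delta
            else if (delta < 0) print "shrinking by " (-delta)
            else print "stable"
        }
    ' "$1"
}

# Example usage:
#   queue_trend depths.txt
```

A queue that keeps growing for the whole test indicates that messages are arriving faster than they are being consumed.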

This is an example of the Red Hat interface, but all providers of message queue technology will provide something similar.

Load Injector¶

This is not one that is always considered but sometimes the performance test results are being impacted by the load injector not having enough resources.

Just as you always ensure that the environment you are testing against is sized like production, you should also ensure that your load injector is able to support the number of threads your test requires.

If your load injector is not sized correctly then requests will wait to be sent or responses will be queued, giving the impression of your application not responding quickly enough when it's actually your tooling.

We can see from the example below that the majority of the time for the transaction request is wait time, with the database activity being very quick. This indicates that there may be an issue with the way the load is being delivered and that the bottleneck is not necessarily the application under test.

Using a tool like OctoPerf allows you to have automatic monitoring of the load generators.

Lighthouse¶

Lighthouse is defined here as:

Lighthouse is an open-source, automated tool for improving the quality of web pages. You can run it against any web page, public or requiring authentication. It has audits for performance, accessibility, progressive web apps, SEO, and more.

You can run Lighthouse in Chrome DevTools, from the command line, or as a Node module. You give Lighthouse a URL to audit, it runs a series of audits against the page, and then it generates a report on how well the page did.

From there, use the failing audits as indicators on how to improve the page.

Each audit has a reference doc explaining why the audit is important, as well as how to fix it.

Lighthouse is included here because sometimes it’s the UI and the process of rendering objects on the screen that is slow, and not the application back-end technology at all.

Clearly Lighthouse is for web-based applications, but for the purposes of this document it is also important to consider the user experience when pages load, and whether improvements can be made to the order in which the page is loaded, making it as asynchronous as possible.

Conclusion¶

These are just observations on places to consider when tracing performance issues in your application. We hope these insights are helpful as you performance test your applications.