What to do with my results

Since I started my career as a tester, I have often been struck by how neglected communication is in load and performance testing. Yet however skilled you are as a technical tester, if you can't explain your analysis and convince decision makers to take action, most of your work will have been in vain. In fact, I've seen colleagues who were not interested in the technical aspects of the job get better customer satisfaction. The good news is that communication is a skill like any other, and you can train it.

Today I would like to share everything I learned about presenting results.

Once upon a time in my load test campaign

In my opinion, you should always come up with a short story describing your load test campaign. Rest assured, it does not have to include fairies and princesses, the truth will do just fine. It allows everyone to understand quickly what happened during the campaign. And it's pretty obvious that a good story always sells better.

The first step to achieve that is to have good news to tell. Unless your test campaign is a complete train wreck, you should at least have made some progress toward understanding how to get better performance (or whatever your precise objective is). So try to build your conclusion as a story of how, test after test, you got closer and closer to the performance objective.

For example:

"After the first standard test, we noticed high response times and a light load on the servers. For that reason, the next test was conducted without the firewall. It helped us assess that the firewall network card was overloaded..."

You can provide a detailed analysis of each test later, but this way you quickly introduce the issue(s) and solution(s), as well as why you may have had to change your test strategy.

Make it readable

A common mistake when writing up your analysis is to stick to large blocks of text, which does not make it any easier to read. Instead, split the text up as much as you can, and use graphs and tables wherever possible to illustrate what you say.

Of course at some point you will have to explain what happened. It is best if you keep it short and only talk about the facts. I learned to stick to short sentences because I often had to write my reports in a foreign language. But short sentences reduce the risk of misunderstanding even in your native language. Keep in mind that your objective is to be understood, not to win the Pulitzer.

Who's my audience

To know how to format your results, take into account who you are addressing:

- Decision makers and/or stakeholders: Make a slideshow with minimal technical information. Don't overlook the importance of graphs in this document, but don't overdo it either: a couple should be fine.

- Technical guys: Prepare a document showing the detailed results of each test along with your analysis/interpretation.

More often than not, you will find yourself having to address both. In that case you could go for a slideshow and a document, but unless you have planned a results presentation meeting, the slideshow might not be required. I usually go for a single complete document describing all the tests in detail, but to make it easy to read there are some rules I follow.

Start by concluding

I have seen a lot of test reports structured like this:

- Chapter 1: Test 1

- Chapter 2: Test 2

- Chapter 3: Test 3

- Etc...

The first issue I see here is that to get to the point you will probably have to go through 10 to 50 pages of graphs. Let's be honest, most readers won't bother.

That's why I was always taught to conclude first. As mentioned earlier, this way even people who are not interested in the details can get the information they want in a couple of minutes.

So I would instead recommend a plan looking like this:

- Context: Why we are running these tests.

- Global conclusion: This includes the little story we talked about earlier, as well as the detailed conclusion of each test.

- Recommendations: A short list of what should be done to improve performance.

- Problem(s) encountered: List of problems to avoid in the future.

- List of tests: Here you list each test launched and explain why some of them won't be presented, either because of errors or because they weren't relevant.

- Test 1

- Test 2

- etc ...

- Appendix: To explain the technical terms to non-testers.

That way you increase your chances of getting your message across, even to people who won't spend more than 5 minutes reading your document.

The recommendations section should contain the list of actions you advise to get the best performance. If you ran out of time, you can suggest an additional load test here.

The problems encountered section is not about naming names, but about documenting your difficulties. It should help you:

- Explain why you ran out of time/reduced the scope.

- Understand where you can do better next time as a tester.

- Better anticipate the pain points you identified.

It will also help anyone else who has to work on the next campaign get up to speed quickly.

The don't list

Let's now talk about what you should not do when presenting results. You might find some of these obvious, but every time I look at a results presentation I find a couple of these mistakes.

I must admit that as a beginner I would have loved to have this list. And I still find myself making some of these mistakes from time to time.

Never talk about "good performance", "resources are ok" or anything along these lines. First, good for whom? You? Your cat? You are not the judge of good performance here; you are merely reporting what you measured. Performance can be "as expected" if you defined an SLA with your customer. It can be "better" or "worse" after some optimization, but as a general rule avoid "good" and "bad". If you have no SLA defined, then don't judge: just give the measurements without comment.
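As a rough illustration of that vocabulary, here is a minimal Python sketch. The function names, the millisecond unit and the exact wording of the output are my own assumptions, not part of any tool. It only ever says "as expected", "not as expected", "better" or "worse", and it stays silent when no SLA exists.

```python
from typing import Optional


def describe_measure(name: str, measured_ms: float,
                     sla_ms: Optional[float] = None) -> str:
    """Report a measurement without calling it good or bad."""
    if sla_ms is None:
        # No SLA agreed with the customer: state the number, nothing more.
        return f"{name}: {measured_ms:.0f} ms"
    verdict = "as expected" if measured_ms <= sla_ms else "not as expected"
    return f"{name}: {measured_ms:.0f} ms, {verdict} (SLA: {sla_ms:.0f} ms)"


def describe_change(name: str, before_ms: float, after_ms: float) -> str:
    """Compare two runs using only 'better', 'worse' or 'unchanged'.

    Assumes before_ms is greater than zero.
    """
    if before_ms == after_ms:
        return f"{name}: unchanged at {after_ms:.0f} ms"
    direction = "better" if after_ms < before_ms else "worse"
    change_pct = abs(after_ms - before_ms) / before_ms * 100
    return (f"{name}: {direction} by {change_pct:.0f}% "
            f"({before_ms:.0f} ms -> {after_ms:.0f} ms)")


print(describe_measure("Login", 850))
print(describe_measure("Login", 1200, sla_ms=1000))
print(describe_change("Search", 2000, 1500))
```

The point of the sketch is that the wording is driven by the SLA or by a previous run, never by the tester's opinion.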

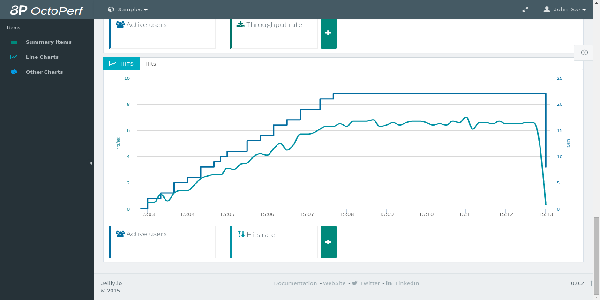

A good graph will illustrate your document nicely, but:

- Make sure the text and scale are readable.

- Graphs showing percents (%) should show values between 0 and 100, not between 0 and the maximum value reached. Otherwise the reader might think something is wrong when it's not.

- A graph should always have a title, a legend and a comment. Your added value is the analysis, so don't copy-paste tons of graphs without a comment underneath.

- Curves showing the same type of value (e.g. the CPU of several servers) should use the same scale, otherwise readers will misinterpret them.

- Color codes are important. Red always refers to an error or a problem, so don't draw the HTTP errors graph in green. Also use distinct colors so that the reader can match each curve to the legend (you can even use dashed lines for colorblind readers).

- If there is a spike on the graph, you have to explain it. No exceptions. This is often how problems are discovered.
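To make sure no spike goes unexplained, you can list them before writing your comments. The following is a minimal Python sketch under my own assumptions: the function name is hypothetical, and "a spike" is arbitrarily defined as any sample more than three times the median of the series. Pick whatever threshold makes sense for your data.

```python
from statistics import median


def find_spikes(samples, factor=3.0):
    """Return (index, value) pairs for samples above factor * median.

    The factor of 3 is an arbitrary assumption, not a standard.
    """
    if not samples:
        return []
    threshold = factor * median(samples)
    return [(i, v) for i, v in enumerate(samples) if v > threshold]


# Response times in ms, one sample per interval (made-up values).
response_times = [120, 130, 125, 900, 128, 122]
for index, value in find_spikes(response_times):
    print(f"Sample {index}: {value} ms needs an explanation")
```

Each entry in the result is a point on the graph that your comment should account for.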

Including a huge table of response times for every step of your user profiles is fine but, as with anything you include in your report, you must analyse it. List the worst values and the values over any SLA, and try to give an overall feeling (percentage of difference) for the response times. Ideally, use a color code (based on the SLA) in the table for better readability.
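Here is a minimal Python sketch of that kind of table analysis. The row layout (`step`, `baseline_ms`, `measured_ms`, `sla_ms`) and the function name are assumptions made for the example, and I assume the "percentage of difference" is computed against a previous run, using the sum of all steps as a crude overall figure.

```python
def analyse_response_times(rows, worst_count=3):
    """Summarise a response time table.

    rows: list of dicts with the keys 'step', 'baseline_ms',
    'measured_ms' and 'sla_ms' (None when no SLA is defined).
    """
    # Slowest steps first.
    worst = sorted(rows, key=lambda r: r["measured_ms"], reverse=True)[:worst_count]
    # Steps over their SLA; steps without an SLA are never judged.
    over_sla = [r for r in rows
                if r["sla_ms"] is not None and r["measured_ms"] > r["sla_ms"]]
    # Crude overall figure: change in total time versus the previous run.
    total_baseline = sum(r["baseline_ms"] for r in rows)
    total_measured = sum(r["measured_ms"] for r in rows)
    overall_change_pct = (total_measured - total_baseline) / total_baseline * 100
    return {
        "worst": [r["step"] for r in worst],
        "over_sla": [r["step"] for r in over_sla],
        "overall_change_pct": round(overall_change_pct, 1),
    }


# Made-up values for illustration only.
table = [
    {"step": "Home", "baseline_ms": 400, "measured_ms": 300, "sla_ms": 1000},
    {"step": "Login", "baseline_ms": 1000, "measured_ms": 1200, "sla_ms": 1000},
    {"step": "Search", "baseline_ms": 2000, "measured_ms": 1500, "sla_ms": 2000},
    {"step": "Checkout", "baseline_ms": 600, "measured_ms": 600, "sla_ms": None},
]
print(analyse_response_times(table, worst_count=2))
```

The three values it returns map directly to the three things worth writing under the table: the slowest steps, the steps over their SLA, and the overall trend.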

Avoid irrelevant information. Copy-pasting 20 graphs showing that everything is fine is not interesting. Even worse, the reader will start paying less attention and might miss critical information. Instead, focus on the relevant graphs that show problems. You can always put all the others in an appendix.

In short?

- Think about who you are addressing: This will tell you where to put the emphasis.

- Conclude first: This ensures most readers will understand your conclusion.

- Make it easy to read: Tell your campaign like a story. Keep your sentences short and focused on facts.