Kafka Load Testing with JMeter

This post is about Kafka and the process I went through recently when writing a performance test for an application that subscribes to messages from it.

The test I ended up with was very straightforward, but there were several hurdles that took a while to resolve. I hope that reading this post will help you avoid them.

This post focuses on how to publish messages onto a Kafka topic; it does not cover how to write a test that consumes messages from Kafka. We wanted to focus first on the concepts behind adding messages, and we will look at consumption in a later post.

If you are performance testing an application that subscribes to a Kafka topic then the consumption of messages will be performed by the application you are testing, and therefore you will not need a consumer test.

You will mostly be performance testing an application that consumes from Kafka, and you would probably only want a consumer test if you were testing your implementation of Kafka itself.

Before we start, let’s take a high-level look at what Kafka is and how it works.

Kafka is a publish/subscribe messaging system that is well suited to high-volume message transfer. It works by publishing (writing) messages to a Topic and subscribing to (reading) messages from a Topic.

From the Kafka documentation:

Producers are those client applications that publish (write) events to Kafka, and consumers are those that subscribe to (read and process) these events. In Kafka, producers and consumers are fully decoupled and agnostic of each other, which is a key design element to achieve the high scalability that Kafka is known for. For example, producers never need to wait for consumers. Kafka provides various guarantees such as the ability to process events exactly-once.

Events are organized and durably stored in topics. Very simplified, a topic is similar to a folder in a filesystem, and the events are the files in that folder. An example topic name could be "payments". Topics in Kafka are always multi-producer and multi-subscriber: a topic can have zero, one, or many producers that write events to it, as well as zero, one, or many consumers that subscribe to these events.

JMeter plugins

There are several plugins that support the testing of Kafka. None of them met my particular requirements, and in the end I used a JSR223 Sampler, which I will detail later, but it is worth looking at the options that are available.

Pepper-Box

Pepper-Box is a JMeter plugin, and a very good one. You build the pepper-box JAR file from its source and copy the JAR file to your JMeter lib/ext folder.

Once installed, you have a couple of Config Elements and a Sampler. It works very well and is easy and intuitive to use.

The reason I did not end up using this solution is that I was unable to add headers to my Kafka messages. If you only need to send messages without headers then this is a really good solution.

KLoadgen

KLoadgen is another very good JMeter plugin. It also requires you to build a JAR file from source, and it is designed to work with Avro Schema Registries.

Once installed, you are again provided with Config Elements and Samplers to support testing Kafka. KLoadgen does support headers, but the solution I was looking to test was not reliant on a Schema Registry.

Others

There are other plugins available if you look. I did not pursue any of them because I decided to build my own test, but you may well find a suitable solution that has already been developed.

Simple Kafka Test

If you want to build a performance test for publishing messages to Kafka it is really straightforward to use the kafka-clients JAR file and script your message producer in a JSR223 Sampler.

I will take you through the simple test that I created which may help you if you need to produce something similar.

Issue During Test Creation

Before we start, I should mention a problem I encountered when trying to create an instance of ProducerRecord, which is effectively the payload sent to the Kafka Topic.

Looking at the ProducerRecord class documentation, you can see that there are six constructors for the class:

ProducerRecord(java.lang.String topic, java.lang.Integer partition, K key, V value)

ProducerRecord(java.lang.String topic, java.lang.Integer partition, K key, V value, java.lang.Iterable<Header> headers)

ProducerRecord(java.lang.String topic, java.lang.Integer partition, java.lang.Long timestamp, K key, V value)

ProducerRecord(java.lang.String topic, java.lang.Integer partition, java.lang.Long timestamp, K key, V value, java.lang.Iterable<Header> headers)

ProducerRecord(java.lang.String topic, K key, V value)

ProducerRecord(java.lang.String topic, V value)

I needed to use one of the constructors that allowed me to pass in headers and, because the Topic I was publishing messages to required a timestamp, I was using:

ProducerRecord(java.lang.String topic, java.lang.Integer partition, java.lang.Long timestamp, K key, V value, java.lang.Iterable<Header> headers)

No matter what I did, I was unable to construct an instance of this class even though I was passing the correct parameters. I was able to construct an instance using all of the other constructors that did not take a java.lang.Iterable<Header> headers argument.

Bearing in mind that including headers was the main reason for using the kafka-clients JAR, this was a bit confusing.

The problem was that I still had the pepper-box JAR in my lib/ext directory under JMeter, and this prevented me from constructing a ProducerRecord instance with headers. This was probably due to an earlier version of kafka-clients being bundled inside pepper-box. As soon as I removed the pepper-box JAR there was no issue with creating an instance that allowed headers.

I would suggest that you delete the pepper-box JAR from your JMeter instance before attempting to build a test of your own.
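If you suspect a similar classpath clash, one generic way to investigate is to ask the JVM which location a class was loaded from. The sketch below is not something the original test used; `ClassOrigin` and `locationOf` are hypothetical names, and it relies only on JDK classes. In a JSR223 Sampler you could pass `ProducerRecord.class` instead of the classes used in `main` and write the result to the JMeter log.

```java
import java.security.CodeSource;

// Hypothetical helper (not part of JMeter or Kafka): reports where a class was loaded from.
final class ClassOrigin {

    static String locationOf(Class<?> type) {
        CodeSource source = type.getProtectionDomain().getCodeSource();
        // Classes loaded by the JDK bootstrap loader (such as String) have no code source.
        if (source == null || source.getLocation() == null) {
            return "JDK (no code source)";
        }
        // For classes loaded from lib or lib/ext this is typically the JAR's URL.
        return source.getLocation().toString();
    }

    public static void main(String[] args) {
        System.out.println("String loaded from: " + locationOf(String.class));
        System.out.println("ClassOrigin loaded from: " + locationOf(ClassOrigin.class));
    }
}
```

If the location printed for a Kafka class points at a plugin JAR rather than the kafka-clients JAR you added, that plugin is the likely source of the conflict.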

JSR223 Sampler

Before we look at the sampler we need to make sure we have the kafka-clients-2.6.0.jar in our JMeter classpath; the simplest way is to add it to your jmeter/lib folder. The kafka-clients JAR can be downloaded from a Maven repository.

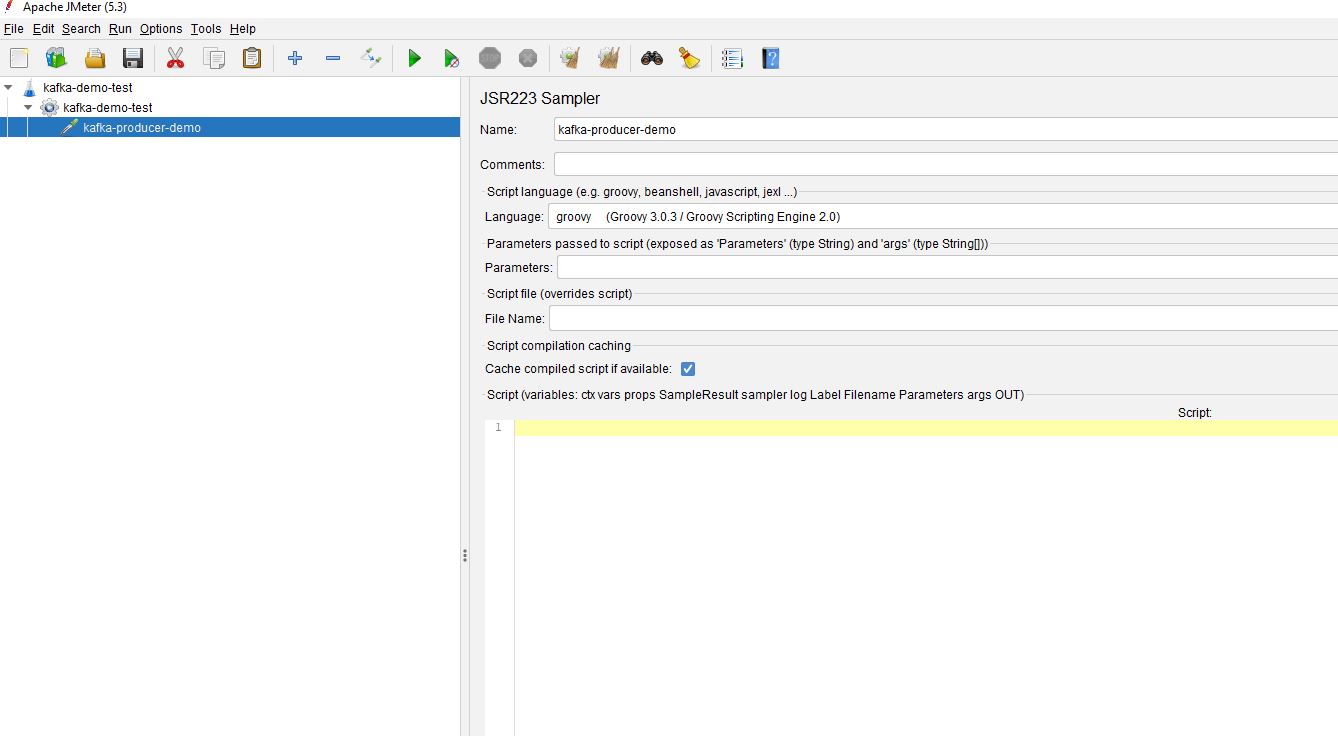

To demonstrate how this works I will create a simple test with a Test Plan, Thread Group and a JSR223 Sampler.

The first thing we need to do is to import a number of Kafka client libraries, so we add this to our JSR223 sampler:

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.common.header.Header;

import org.apache.kafka.common.header.internals.RecordHeader;

import org.apache.kafka.common.header.Headers;

import org.apache.kafka.common.header.internals.RecordHeaders;

Connection Properties

The next thing we need to do is to set up our connection properties. The ones required for the Kafka Topic I was testing are below, and I believe they are the most common ones when establishing a connection. There are others, which are listed in the Kafka producer configuration documentation.

You may need to talk with your development teams to get a list for the Kafka Topic you wish to test, along with appropriate values.

This is how the connection properties need to be configured in our JSR223 Sampler.

Properties props = new Properties();

props.put("zookeeper.connect", "<server-name>:<port>,<server-name>:<port>");

props.put("bootstrap.servers", "<server-name>:<port>,<server-name>:<port>");

props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

props.put("compression.type", "none");

props.put("batch.size", "16384");

props.put("linger.ms", "0");

props.put("buffer.memory", "33554432");

props.put("acks", "1");

props.put("send.buffer.bytes", "131072");

props.put("receive.buffer.bytes", "32768");

props.put("security.protocol", "SSL");

props.put("sasl.kerberos.service.name", "kafka");

props.put("sasl.mechanism", "GSSAPI");

props.put("ssl.key.password", "<ssl-key-password>");

props.put("ssl.keystore.location", "<keystore-location>");

props.put("ssl.keystore.password", "<keystore-password>");

props.put("ssl.keystore.type", "JKS");

props.put("ssl.truststore.location", "<truststore-location>");

props.put("ssl.truststore.password", "<truststore-password>");

props.put("ssl.truststore.type", "JKS");

Many of the above are left at their default values, which may be suitable for your testing, but there are some that are worth looking at in more detail.

props.put("zookeeper.connect", "<server-name>:<port>,<server-name>:<port>");

props.put("bootstrap.servers", "<server-name>:<port>,<server-name>:<port>");

These values identify the servers the Kafka cluster is running on. The kafka-clients producer connects through bootstrap.servers, where multiple brokers are comma separated in the format server-name:port. zookeeper.connect is not a producer setting, so the producer does not use it to connect and it can normally be left out. As mentioned before, speak with your development teams if you are unsure.
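Because the server list is a single comma-separated string, it can be convenient to build it from individual host and port values, for example when they come from a data file. This is only a minimal sketch using JDK classes; `BootstrapServers`, `join` and the example host names are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Properties;
import java.util.stream.Collectors;

// Hypothetical helper: builds the "host:port,host:port" string for bootstrap.servers.
final class BootstrapServers {

    // Joins host -> port pairs in insertion order.
    static String join(Map<String, Integer> brokers) {
        return brokers.entrySet().stream()
                .map(entry -> entry.getKey() + ":" + entry.getValue())
                .collect(Collectors.joining(","));
    }

    public static void main(String[] args) {
        // Example host names only; use the brokers for your own cluster.
        Map<String, Integer> brokers = new LinkedHashMap<>();
        brokers.put("broker-1.example.com", 9093);
        brokers.put("broker-2.example.com", 9093);

        Properties connection = new Properties();
        connection.put("bootstrap.servers", join(brokers));
        System.out.println(connection.getProperty("bootstrap.servers"));
    }
}
```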

props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

When we look at the ProducerRecord we will send to the Kafka Topic later in this post, you will see there is a key and a value as part of the constructor. These two properties tell the producer how to serialize the key and the value.

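In our configuration we are sending String values for both. If your messages are JSON you can often still send them as a String; alternatively you can use a JSON serializer class. The kafka-clients JAR does not ship one in org.apache.kafka.common.serialization, so the class has to come from another library on the JMeter classpath, for example the connect-json artifact, whose serializer works with Jackson JsonNode values:

props.put("value.serializer", "org.apache.kafka.connect.json.JsonSerializer");

The structure of the key and value needs to match what the Topic is expecting.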

props.put("ssl.key.password", "<ssl-key-password>");

props.put("ssl.keystore.location", "<keystore-location>");

props.put("ssl.keystore.password", "<keystore-password>");

props.put("ssl.keystore.type", "JKS");

props.put("ssl.truststore.location", "<truststore-location>");

props.put("ssl.truststore.password", "<truststore-password>");

props.put("ssl.truststore.type", "JKS");

The last set of connection properties controls how you authenticate with the Kafka cluster. Using a Java keystore and truststore is a common approach; your set-up may be different, but whatever it is, it will need to be included.
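Since these properties include passwords, you may prefer to keep them outside the test plan rather than typing them into the sampler. The sketch below shows one possible approach, assuming the settings are stored as key=value pairs in the standard java.util.Properties format. `ConnectionProps` and `load` are hypothetical names, and the inline text in `main` stands in for a file you would open with a FileReader.

```java
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical helper: loads connection settings from key=value text.
final class ConnectionProps {

    static Properties load(Reader source) {
        Properties settings = new Properties();
        try {
            settings.load(source);
        } catch (IOException e) {
            // Surface read failures without forcing callers to handle a checked exception.
            throw new UncheckedIOException(e);
        }
        return settings;
    }

    public static void main(String[] args) {
        // Placeholder values only; a real file would hold your own paths and passwords.
        String text = String.join("\n",
                "security.protocol=SSL",
                "ssl.keystore.location=/path/to/keystore.jks",
                "ssl.keystore.type=JKS");
        Properties settings = load(new StringReader(text));
        System.out.println(settings.getProperty("security.protocol"));
    }
}
```

The resulting Properties object can be passed to the producer in the same way as one populated with individual put calls.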

Kafka Producer & Producer Record

We can now create the connection to Kafka which we do with this line:

KafkaProducer<String, String> producer = new KafkaProducer<String, String>(props);

We create a KafkaProducer and pass the properties we set up and assigned to the props variable.

We now have a mechanism to pass a message to a Kafka Topic and we will look at this next.

As discussed earlier, in this example we pass our message as a String, as that is what the producer is configured to expect and it is in line with the Topic we are sending the message to. Yours may be different if your Topic is configured to accept messages in an alternative format.

For the purposes of this test we will create a simple string:

String message = "Hello World";

We now configure any Headers we want to send with our message:

List<Header> headers = new ArrayList<Header>();

headers.add(new RecordHeader("schemaVersion", "1.0".getBytes()));

headers.add(new RecordHeader("messageType","OCTOPERF_TEST_MESSAGE".getBytes()));

Each header is added to a List, which is Iterable, and in our example we have added two headers. As with all these values, they need to match what is expected by your Kafka Topic.

The header values need to be converted to bytes before they are added to the list.
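Note that getBytes() with no argument uses the JVM's default charset. If your consumers expect a specific encoding, a minimal sketch of encoding the values explicitly as UTF-8 might look like this. It uses only JDK classes; `HeaderBytes` and `encode` are hypothetical names, and each resulting byte array is what you would pass as the second argument to new RecordHeader.

```java
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: converts header values to bytes with an explicit charset.
final class HeaderBytes {

    // Encodes each value as UTF-8, independent of the JVM default charset.
    static Map<String, byte[]> encode(Map<String, String> headers) {
        Map<String, byte[]> encoded = new LinkedHashMap<>();
        for (Map.Entry<String, String> header : headers.entrySet()) {
            encoded.put(header.getKey(), header.getValue().getBytes(StandardCharsets.UTF_8));
        }
        return encoded;
    }

    public static void main(String[] args) {
        Map<String, String> headers = new LinkedHashMap<>();
        headers.put("schemaVersion", "1.0");
        headers.put("messageType", "OCTOPERF_TEST_MESSAGE");
        encode(headers).forEach((name, bytes) ->
                System.out.println(name + " -> " + bytes.length + " bytes"));
    }
}
```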

We can now build the record we will send to our Kafka Topic:

Date latestdate = new Date();

ProducerRecord<String, String> producerRecord = new ProducerRecord<String, String>("kafka-topic-name", partition-integer, latestdate.getTime(), "key", message, headers);

If you remember the constructors we spoke about in an earlier section, we were going to use this one, as it matches what our Kafka Topic expects in terms of data:

ProducerRecord(java.lang.String topic, java.lang.Integer partition, java.lang.Long timestamp, K key, V value, java.lang.Iterable<Header> headers)

Let’s look at each argument in turn:

- kafka-topic-name - this String is the name of the Kafka Topic you are targeting.

- partition-integer - Kafka Topics are divided into partitions to allow parallel processing; you set the partition you want here.

- latestdate.getTime() - the timestamp for the record, in milliseconds since the epoch.

- key - this is required and needs to be in the format we discussed in the properties section. What the value needs to be depends on your organisation’s implementation of the Kafka Topic.

- message - this is the message we defined earlier and, as with the key value, needs to be formatted as defined in the properties section.

- headers - these are the headers we set up in the previous section.

Send Message & Tidy

All that is left to do is send the message and tidy up the connection, which we do using:

producer.send(producerRecord);

producer.close();

And that is it: your message and headers are posted to your Kafka Topic.

All you need to do now is build the load profile around the JSR223 Sampler and you have a performance test. You may want to move the creation and closing of the producer outside of the message iterations, to avoid constantly connecting and disconnecting, as this may not be representative of how your application will publish messages.
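The create-once, reuse, close-at-the-end pattern can be sketched in plain Java as follows. This is an illustration under stated assumptions rather than JMeter-specific code: `FakeProducer` is a stand-in for KafkaProducer (which the Kafka documentation describes as thread safe), and `REGISTRY` plays the role of whatever shared store you use to hold the producer between iterations, for example JMeter properties. All names here are hypothetical.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

final class SharedClientDemo {

    // Stand-in for KafkaProducer: counts created instances and sent messages.
    static final class FakeProducer implements AutoCloseable {
        static final AtomicInteger CREATED = new AtomicInteger();
        final AtomicInteger sent = new AtomicInteger();
        volatile boolean closed;

        FakeProducer() { CREATED.incrementAndGet(); }

        void send(String message) { sent.incrementAndGet(); }

        @Override
        public void close() { closed = true; }
    }

    // Shared store that outlives a single sampler execution.
    static final ConcurrentHashMap<String, FakeProducer> REGISTRY = new ConcurrentHashMap<>();

    // Returns the shared producer, creating it only on first use.
    static FakeProducer producer() {
        return REGISTRY.computeIfAbsent("producer", key -> new FakeProducer());
    }

    // Called once at the end of the test to release the connection.
    static void shutdown() {
        FakeProducer existing = REGISTRY.remove("producer");
        if (existing != null) {
            existing.close();
        }
    }

    public static void main(String[] args) {
        for (int i = 0; i < 1000; i++) {
            producer().send("Hello World " + i);
        }
        FakeProducer shared = producer();
        shutdown();
        System.out.println("created=" + FakeProducer.CREATED.get()
                + " sent=" + shared.sent.get() + " closed=" + shared.closed);
    }
}
```

In a JMeter test plan the creation and shutdown steps would typically sit in separate set-up and tear-down elements, with only the send call inside the iterated sampler.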

Parameterisation of Message

It is worth noting that you are more than likely going to pass random data into your messages or maybe read from a flat file and pass values in to the JSR223 sampler.

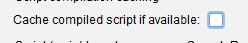

One thing I found when using this technique was that the values of ${..} variables embedded directly in the script were not being updated as the test iterated. This was because the Cache compiled script if available option was selected: JMeter substitutes the variables into the script text and then caches the compiled result, so only the first substitution is used. Once the option was un-checked the current variable values were used.

Alternatively, you can leave caching on, which will give you better performance, and read values with the vars.get and vars.put syntax or pass them in through the Parameters field. Both of these methods make sure the new value is used.
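The idea of looking values up at run time, instead of having them baked into the script text, can be sketched like this. It is a simplified illustration using only JDK classes: the Map stands in for JMeter's `vars` object, and `MessageBuilder`, `customerId` and `amount` are hypothetical names. The values are concatenated without JSON escaping, so this only suits simple test data.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper: builds a message from variables read on every call.
final class MessageBuilder {

    // Looks the values up each time, so every iteration sees the current data.
    static String build(Map<String, String> vars) {
        return "{\"customerId\":\"" + vars.get("customerId") + "\","
                + "\"amount\":" + vars.get("amount") + "}";
    }

    public static void main(String[] args) {
        Map<String, String> vars = new HashMap<>();
        vars.put("customerId", "C-1001");
        vars.put("amount", "25.50");
        System.out.println(build(vars));

        // A later iteration with new data produces a new message.
        vars.put("customerId", "C-1002");
        System.out.println(build(vars));
    }
}
```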

Conclusion

Hopefully this will give you some guidance on how you can use JMeter to test Kafka Topics and help you avoid some of the issues I found when building a test for this technology.