Managing data during performance testing

We are going to talk about data in this blog post, predominately test data required for performance testing.

This is something that makes the life of a performance tester extremely difficult and awkward as because of the huge quantities required, in the right state, that match the criteria required for your test to run.

We often have to approach the use of large quantities data for the purpose of performance testing in a number of ways:

- Use a small subset,

- Ask business users or functional test teams for a set of data, that may become redundant after an execution of your testing because you may alter the state during testing making this data short lived and therefore requests to the business become frequent,

- Restore your database after each test iteration, this may not be possible if the database is shared and is certainly not agile,

- Create your own data before the tests start, this can be long-winded and may require multiple legacy system interaction or overnight batch processes to get the data in the right state.

Fear not, help is at hand from your friends at OctoPerf.

As we have seen above there are a number of valid ways to get data but the one we are going to explore and the one that make our tests reusable and agile is to query the database of the application under test, or any supporting application databases, to retrieve usable data using SQL this way we avoid our defined pitfalls above by:

- Taking as much data as we need from our databases,

- Not needing to ask the business because we have the relevant SQL at our disposal,

- Not needing to restore the database as we just take more of the data we need from our data-sources

- Avoid creating your own data using the user interface.

Ok, we need to caveat this if you have a performance test environment that does not contain a copy of production data, or artificially constructed representative data volumes then this approach will not work but you could argue that performance testing on a system that does not have production quantities of data is not representative so lets assume you do, because I think most will.

Before moving on there is obviously the ability, in JMeter, to create random data that conform to any number of rules (Dates, Random Strings or Integers, Values from Arrays, a value from any number of the JMeter native, or 3rd party, function libraries.

But if you need data that exists in your application under test to either use, update or delete then you need to know the values before testing starts and this is what we are looking at in this post.

Basic Principles¶

Before we get into the detail lets discuss some basic principles around what we are trying to achieve here:

- SetUp - Query the database of your system under test, or any supporting systems, even calling external system API's to get valid data for your tests and write these to flat files,

- Execute the tests using this data,

- TearDown - Delete any unwanted data or data files.

Simple!

Its all about making each test responsible for its own data sets and their creation, using the data we have extracted or created and then destroying it if necessary after the test completes.

The test can then be run as often as is necessary against any test environment with no prior knowledge of available data in the environment because the test takes responsibility for all of this.

Its a further example of shift left performance testing in pipelines where regular execution against multiple environment is a necessity and a benefit and you don't want data to constrain you.

Lets look at each aspect in turn and incorporate a working example.

Example Database Setup¶

In order to demonstrate the data process we are going to create a dummy SQLite database and then create a Dummy Sampler to consume the data from the database which will aid in the demonstration of this data approach.

In order to create our test database we will first install the database by downloading SQLite and uncompressing the binaries.

In a enterprise solution you will be extracting data from alternative database technologies, this does not change the approach only the way you configure your JDBC Connection to them.

Add the SQLite binaries to your PATH and then executed the command

sqlite3 OctoPerf.db

to create a dummy sqlite database.

We then open the database

.open OctoPerf.db

We then create a dummy table

CREATE TABLE OctoPerf_Table_1 (Id Int, Name Varchar);

If we run the

.tables

command we see our empty table.

Lets now populate the table:

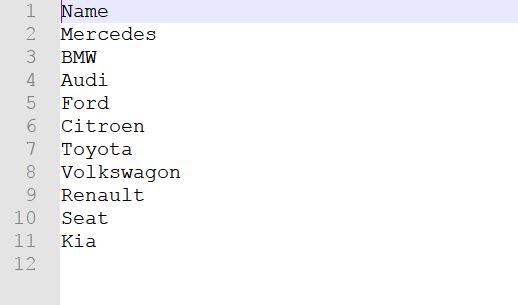

INSERT INTO OctoPerf_Table_1 (Id,Name) VALUES

(1,'Mercedes'),

(2,'BMW'),

(3,'Audi'),

(4,'Ford'),

(5,'Citroen'),

(6,'Toyota'),

(7,'Volkswagon'),

(8,'Renault'),

(9,'Seat'),

(10,'Kia');

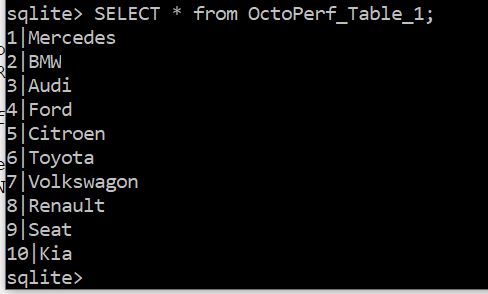

And to check the values we execute:

SELECT * from OctoPerf_Table_1;

Now lets create a second table using the following commands, this time a location for each entry:

CREATE TABLE OctoPerf_Table_2 (Id Int, Table_One_Id Int, Location Varchar);

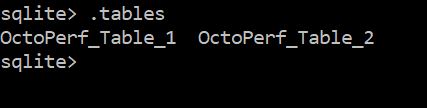

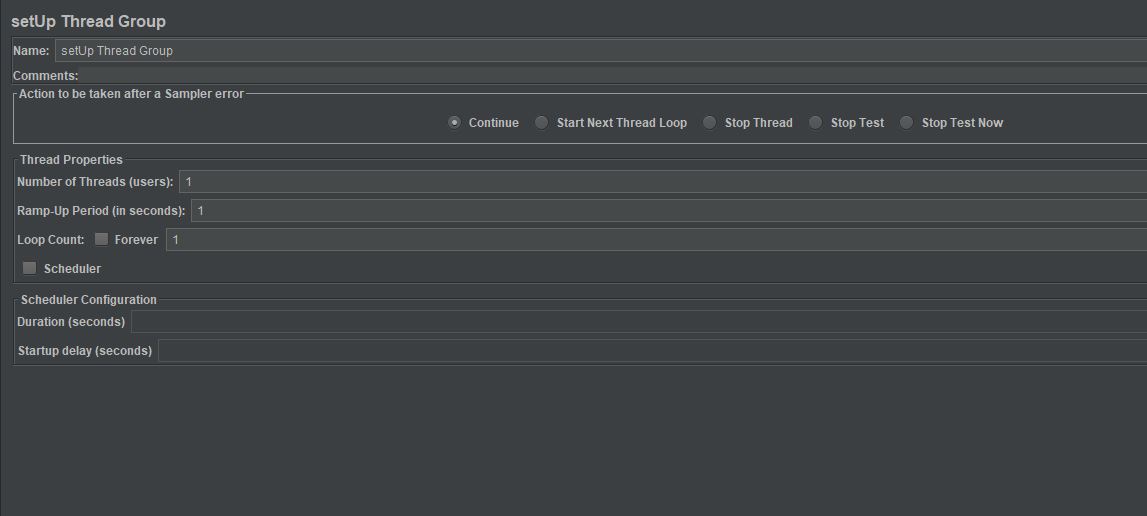

If we run the .tables command we see two tables

Lets now populate this table:

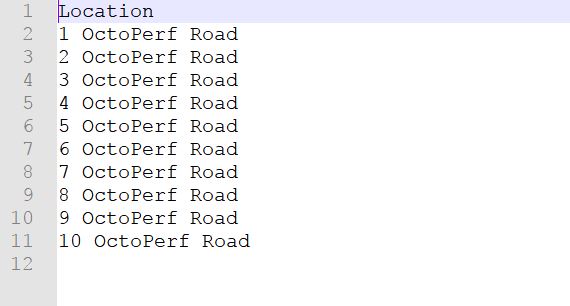

INSERT INTO OctoPerf_Table_2 (Id,Table_One_Id, Location) VALUES

(1, 1,'1 OctoPerf Road'),

(2, 2,'2 OctoPerf Road'),

(3, 3,'3 OctoPerf Road'),

(4, 4,'4 OctoPerf Road'),

(5, 5,'5 OctoPerf Road'),

(6, 6,'6 OctoPerf Road'),

(7, 7,'7 OctoPerf Road'),

(8, 8,'8 OctoPerf Road'),

(9, 9,'9 OctoPerf Road'),

(10, 10,'10 OctoPerf Road');

And to check the values we execute:

SELECT * from OctoPerf_Table_2;

This is enough to get us started, we will deal with the Dummy Sampler creation later

SetUp¶

Ok this is the critical bit, now for the most part .csv files are commonly used as data parameter input into JMeter performance tests, there are numerous alternatives but flat files are:

- well managed,

- easy to share amongst threads and thread groups,

- can be recycled (or not),

- data can be access randomly if required.

This simple Config Element is extremely powerful and flexible and lightweight enough to support even the most complex of tests, you can find a really good Blog Post on the subject by following this link.

By dynamically creating these .csv files will give us significant control over our ability to test repeatedly and consistently, we will therefore use flat files in our example, populated by querying our database.

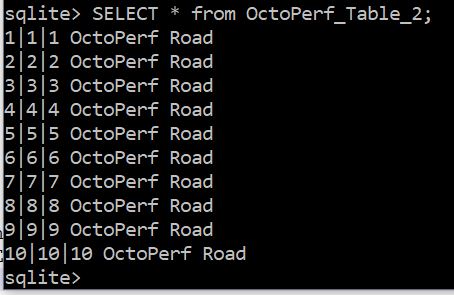

Now there are many ways to approach this with the two most elegant approaches being to either use a setUp Thread Group or create a separate Test Plan for the data creation exercise.

Importantly you want your data creation set-up activity to complete before your tests start as otherwise you may be expecting data to be available to your tests before it has been created.

So whilst both of these approaches are equally as suitable as the other, with the above statement that the data should be available before the test start in mind the approach to use will depend on whether you have multiple Test Plans that run concurrently or a single Test Plan that contains multiple Thread Groups.

If you have multiple Test Plans you will need a separate, isolated, Test Plan for your data creation exercise whereas if you have a single Test Plan with multiple Thread Groups then you can put your data creation in a setUp Thread Group although under these conditions you could do either.

For our example we will use a setUp Thread Group to query our database and create a set of flat files.

Clearly it is important to know what data we need and whilst this example is theoretical and we have not created our Dummy Sampler yet lets assume we need a list of car manufactures and their trading address.

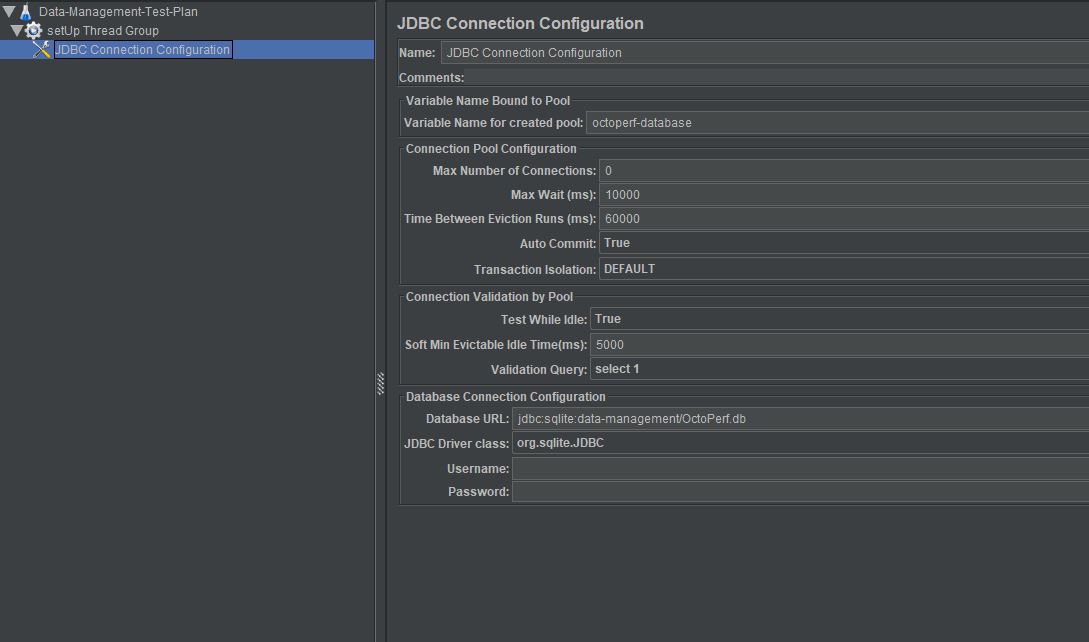

Lets add our JDBC Connection Configuration element, and configure it as so.

You can see we have selected org.sqlite.JDBC as our driver, this will change depending on your database technology as will the syntax of the Database URL which for our example is in the format

jdbc:sqlite: followed by the location of the database file.

Notice we have also created a variable name for the created pool 'octoperf-database' which we will use in the JDBC Request Sampler; talking of which lets add a JDBC Request Sampler.

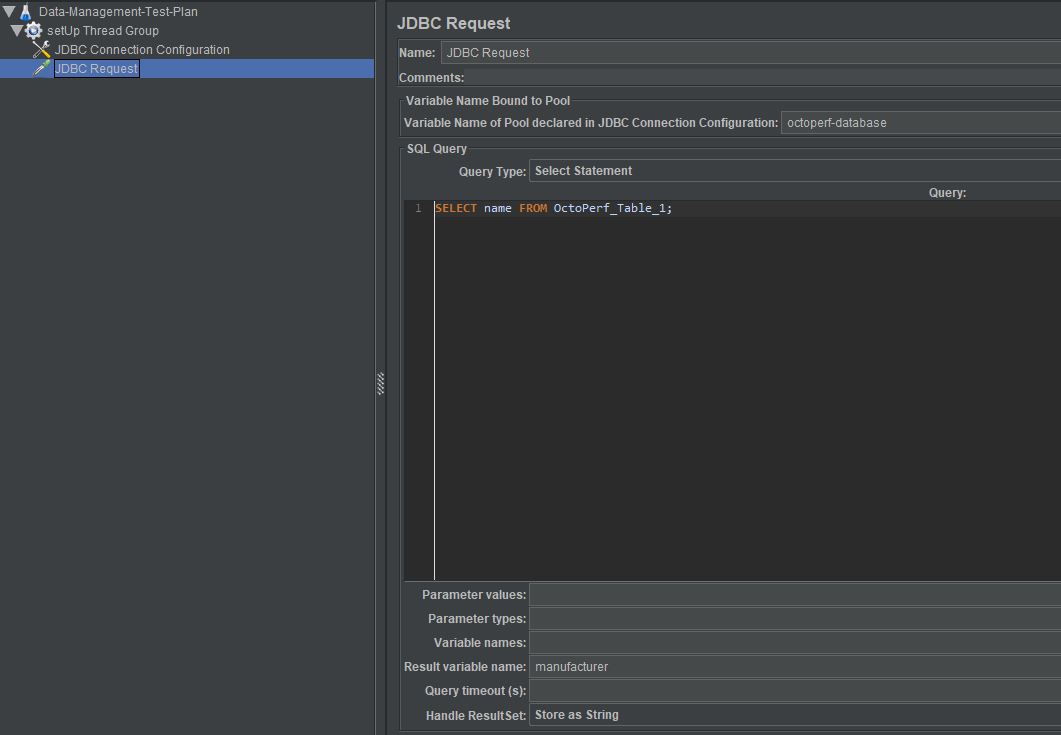

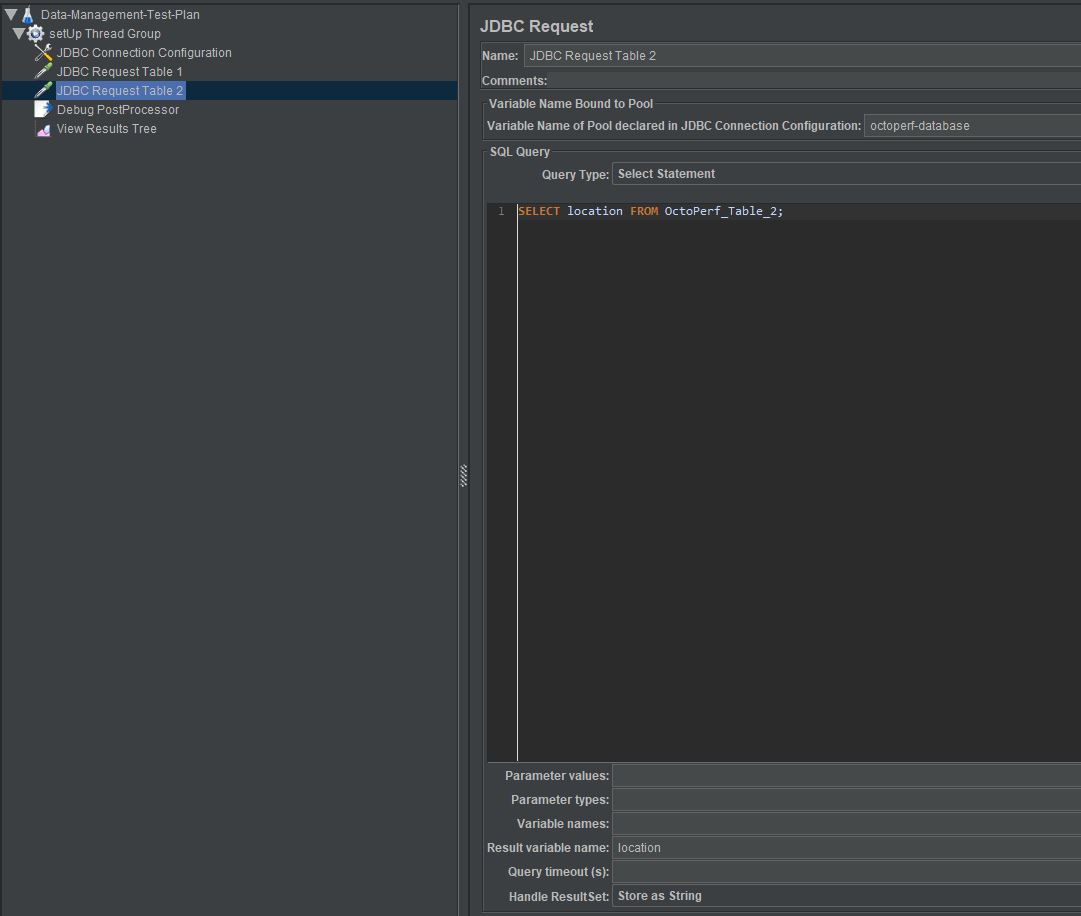

So you can see that we have added our connection pool variable so we use the correct database for our query.

We have a simple SELECT statement, also note the Query Type is SELECT Statement and we are going to store the results in a results object variable called manufacturer.

Simple, lets add a Results Tree Listener and a Debug Post Processor and run the Test Plan.

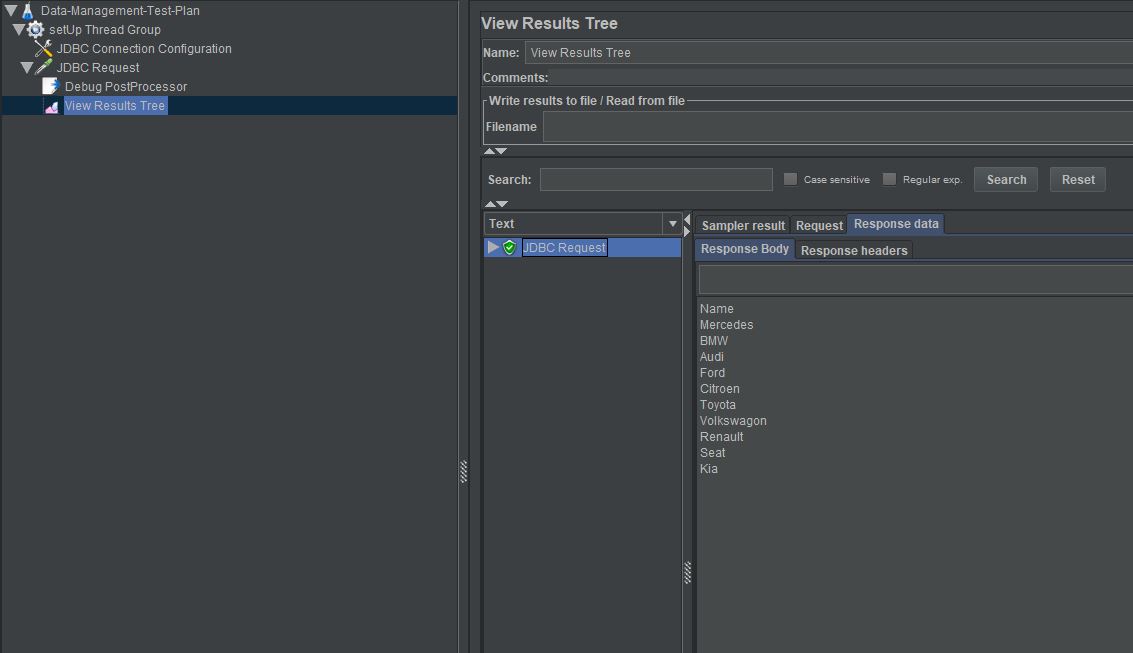

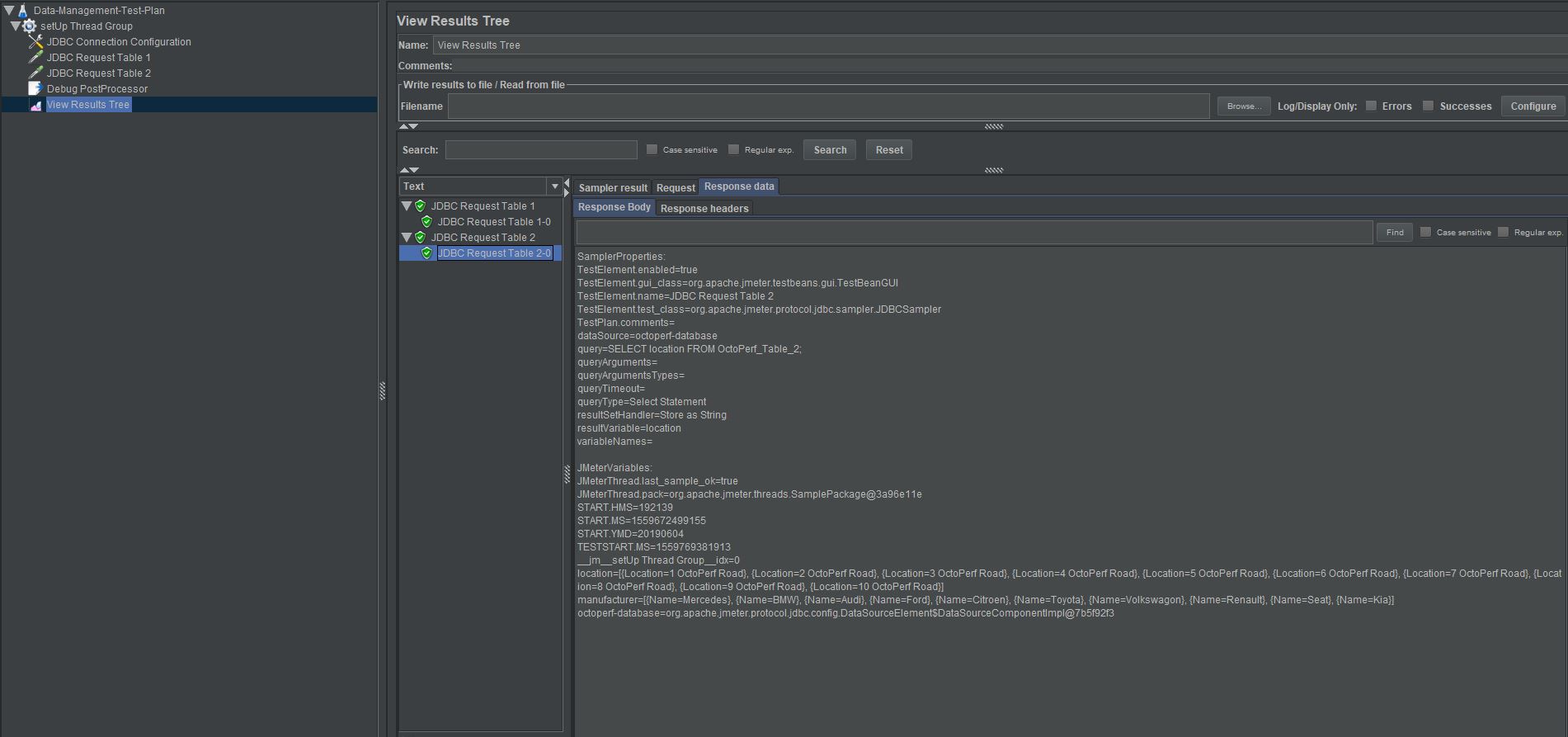

Our Results Tree Listener shows us the result of our query in the Response Body.

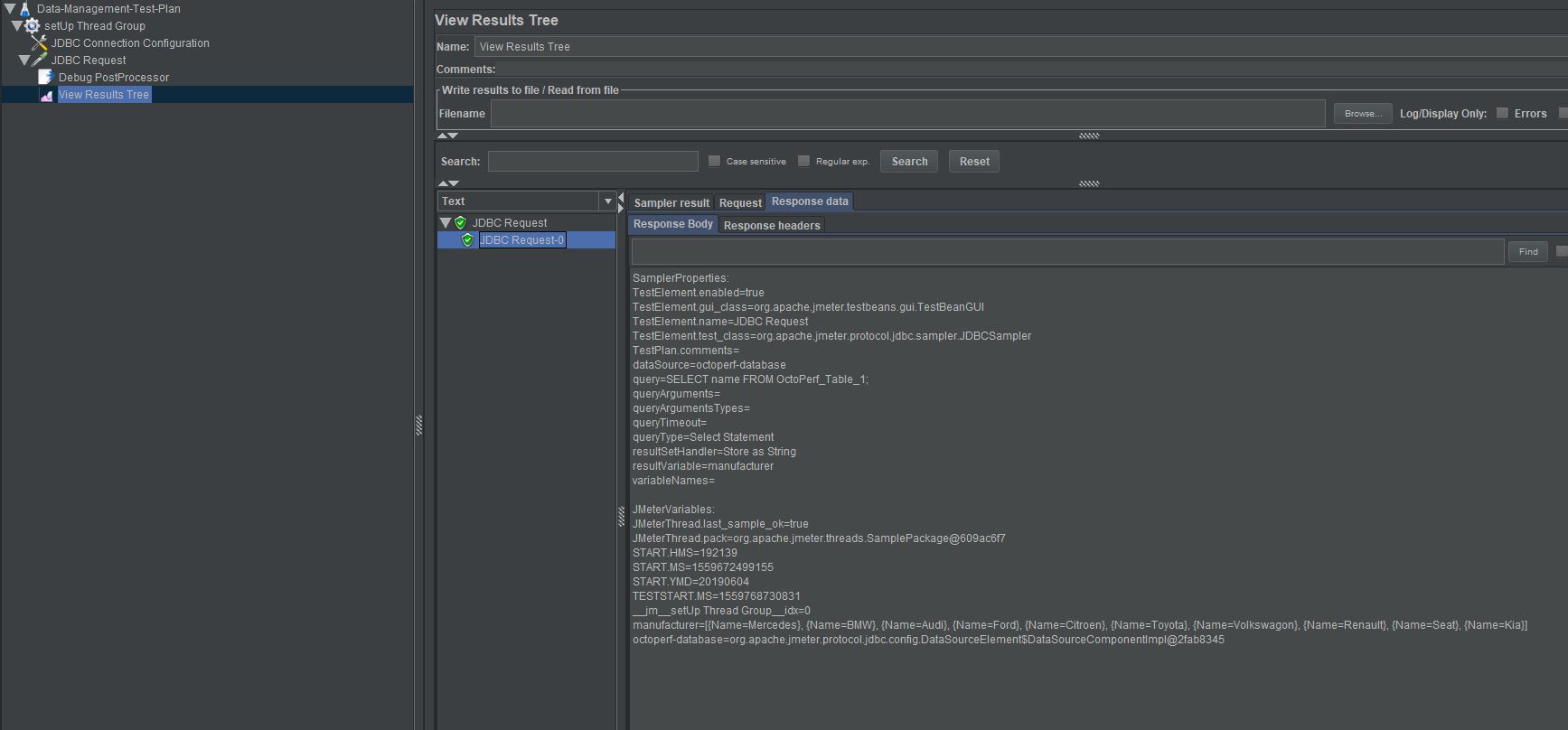

Our Debug Post Processor shows us the contents of our result object variable manufacturer which contains our table values as an object array.

manufacturer=[{Name=Mercedes}, {Name=BMW}, {Name=Audi}, {Name=Ford}, {Name=Citroen}, {Name=Toyota}, {Name=Volkswagon}, {Name=Renault}, {Name=Seat}, {Name=Kia}]

Great we are now extracting data from our first table, lets add the second table.

This is identical with the exception of the SELECT statement and the results object variable which is called location

Our Debug Post Processor shows us the contents of our result object variables manufacturer and location

location=[{Location=1 OctoPerf Road}, {Location=2 OctoPerf Road}, {Location=3 OctoPerf Road}, {Location=4 OctoPerf Road}, {Location=5 OctoPerf Road}, {Location=6 OctoPerf Road}, {Location=7 OctoPerf Road}, {Location=8 OctoPerf Road}, {Location=9 OctoPerf Road}, {Location=10 OctoPerf Road}]

manufacturer=[{Name=Mercedes}, {Name=BMW}, {Name=Audi}, {Name=Ford}, {Name=Citroen}, {Name=Toyota}, {Name=Volkswagon}, {Name=Renault}, {Name=Seat}, {Name=Kia}]

Ok lets complete the set-up activity and write these database query results to a flat file for use in the rest of our test.

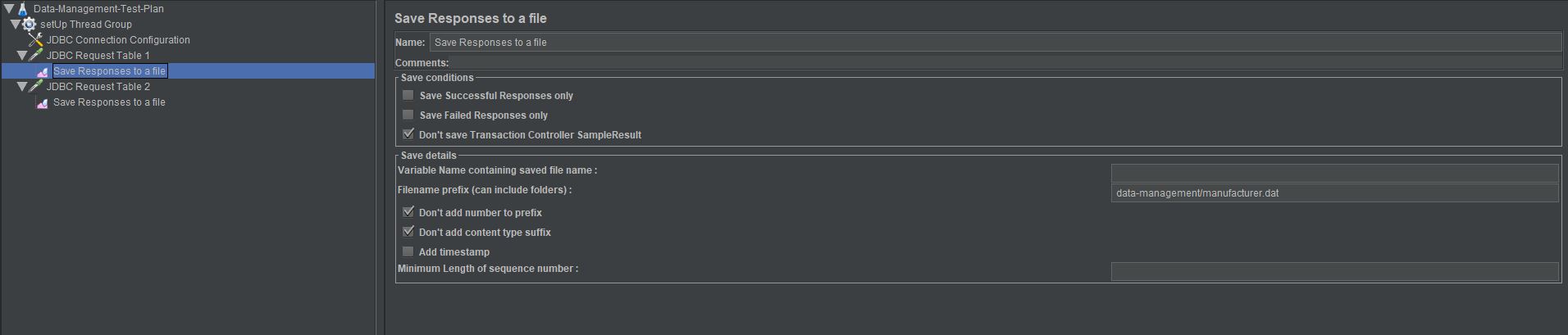

There are many ways to accomplish this in JMeter but for simplicity we will use a Save Response to a file Listener and we'll include one for each JDBC Request Sampler.

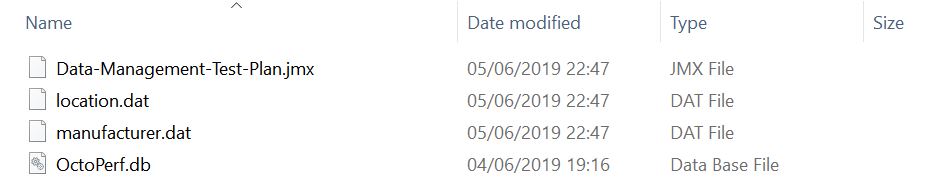

The listener highlighted in the example writes to a file called manufacturer.dat in the data-management folder and the location dataset writes to a file called location.dat in the same folder.

And they each contain the output of these query's.

Thats the end of the setUp Thread Group, that wasn't so difficult was it.

Execute¶

Now in a real life situation you will be testing some form of application as part of the execute stage but for our example we will use a Dummy Sampler to simulate a request using our data files that we have generated.

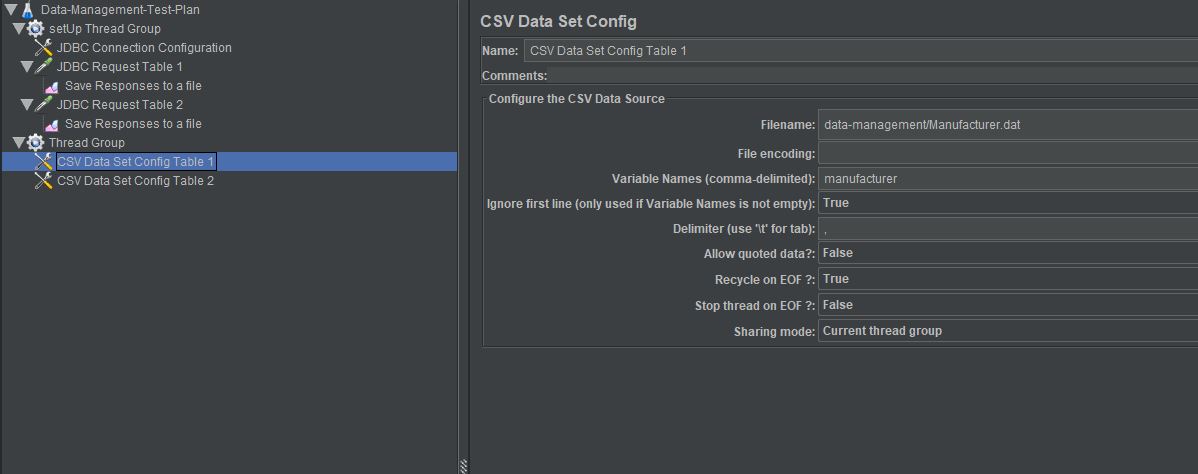

First of all lets add the CSV Data Set Config to read the files we will create, as they will not exist until the setUp Thread Group has been executed.

The highlighted entry in the example below is the manufacturer values, the other is the locations.

Note we have set the Ignore first line (only used if Variable Names is not empty) to ensure we ignore the first line of our files that contains the table name we originally extracted the data from.

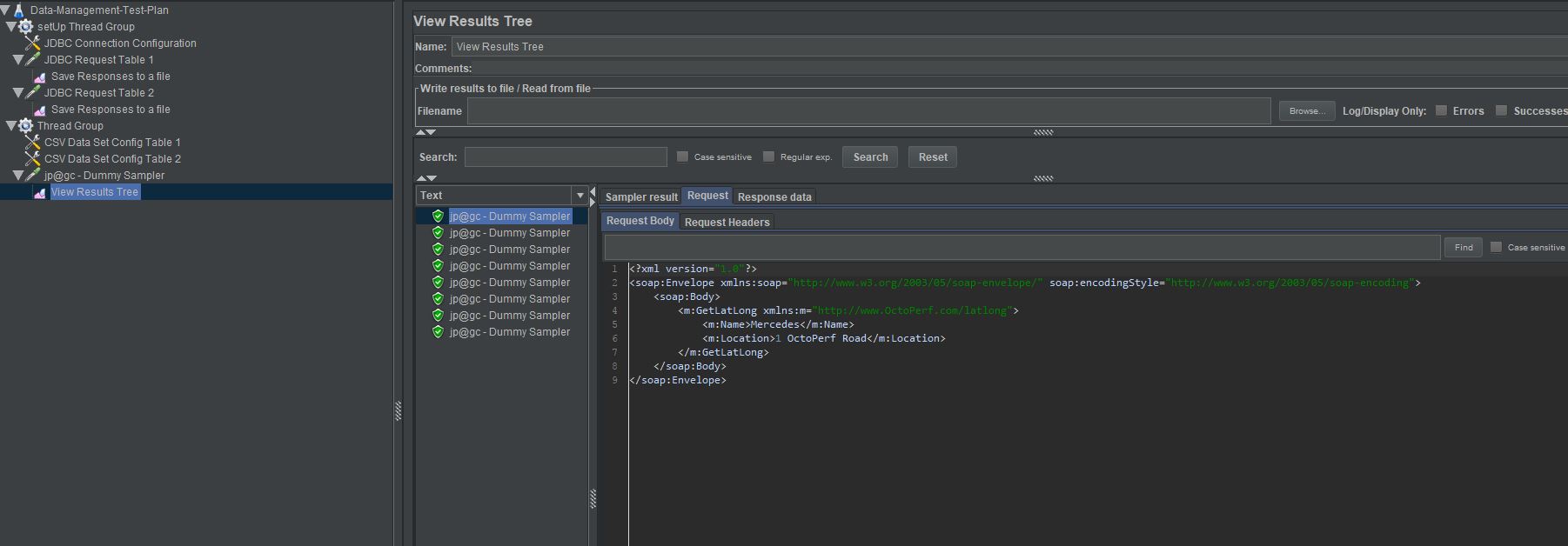

For our Dummy Sampler we will create a simple XML Request and Response to demonstrate the use of the data we have extracted from our database.

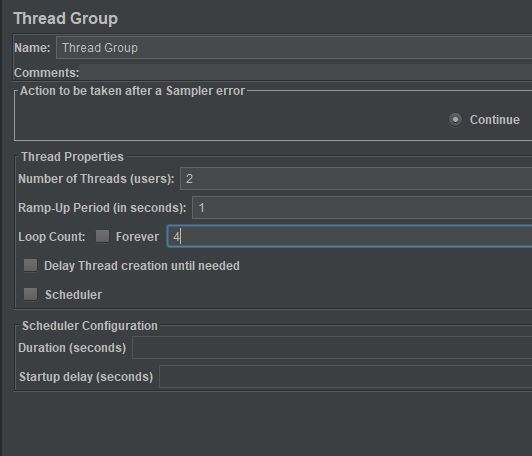

Lets add a View Results Tree Listener and run our test, for simplicity we will configure our Thread Group to have 2 Threads and Iterate 4 times each and run our test.

We can see our request contains the values from our database tables and as this is a very artificial request and response test scenario we will not spend any more time on it.

TearDown¶

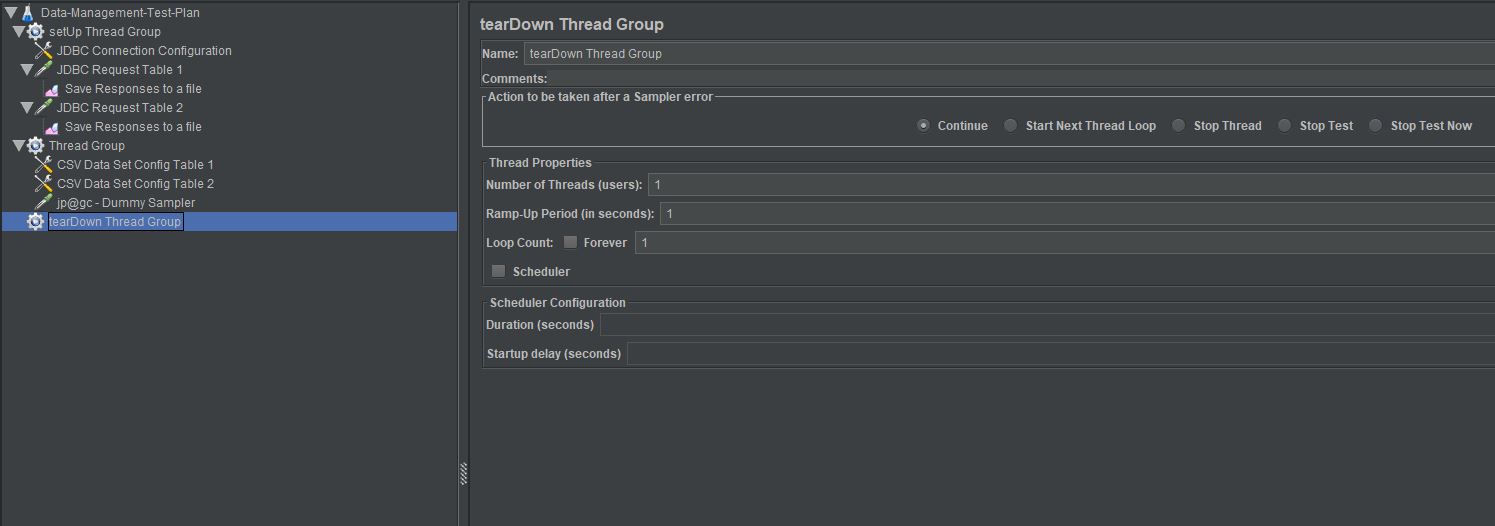

When dynamically generating data and wanting to re-run tests with fresh data for each execution you need to do a bit of housekeeping once your test completes and the simplest way to do this is to add a tearDown Thread Group to our test.

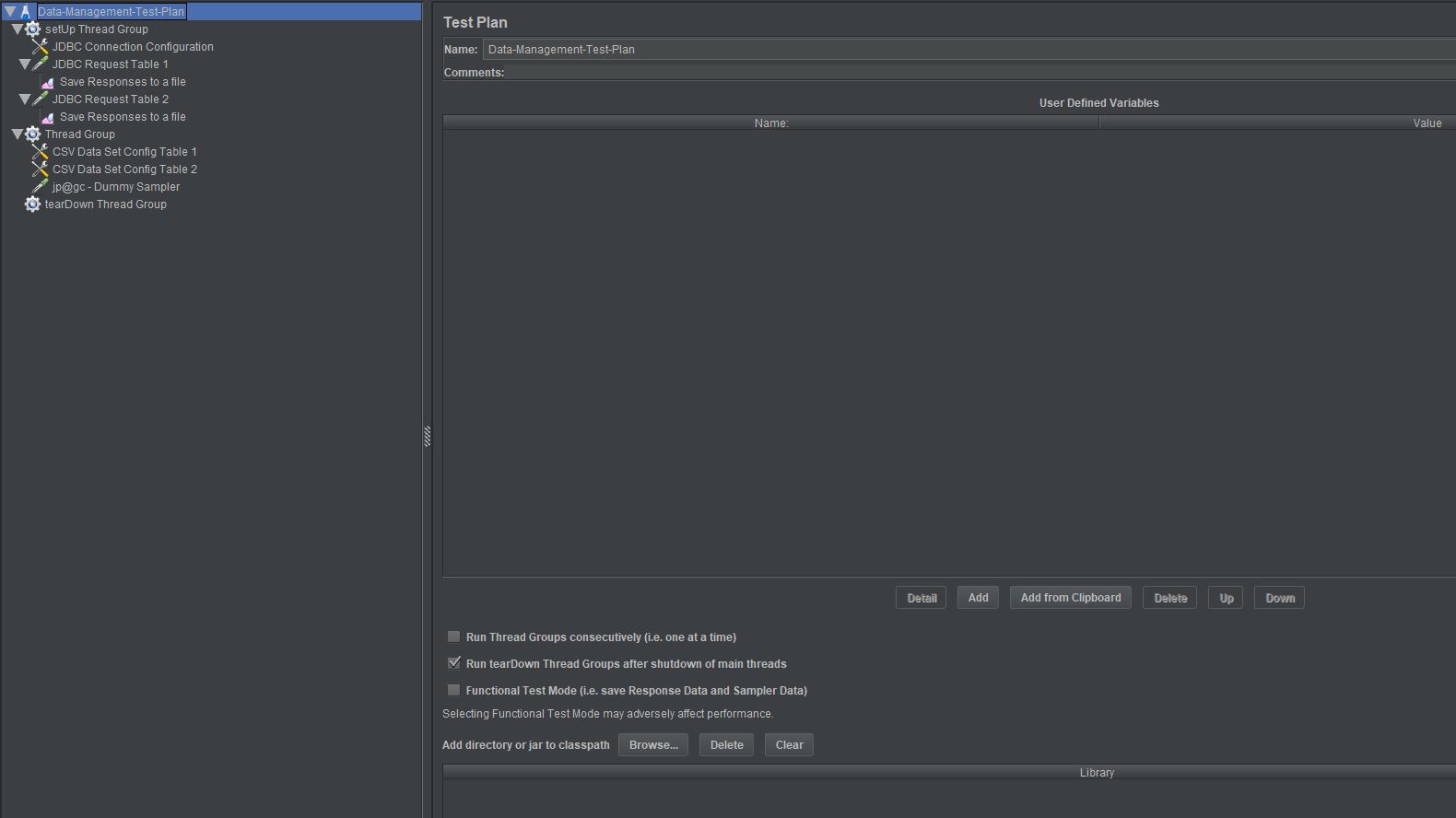

Just a note to make sure that under the Test Plan the Run tearDown Thread Groups after shutdown of main threads checkbox is selected as this means this part of the Test Plan

is not run until the test execution has completed.

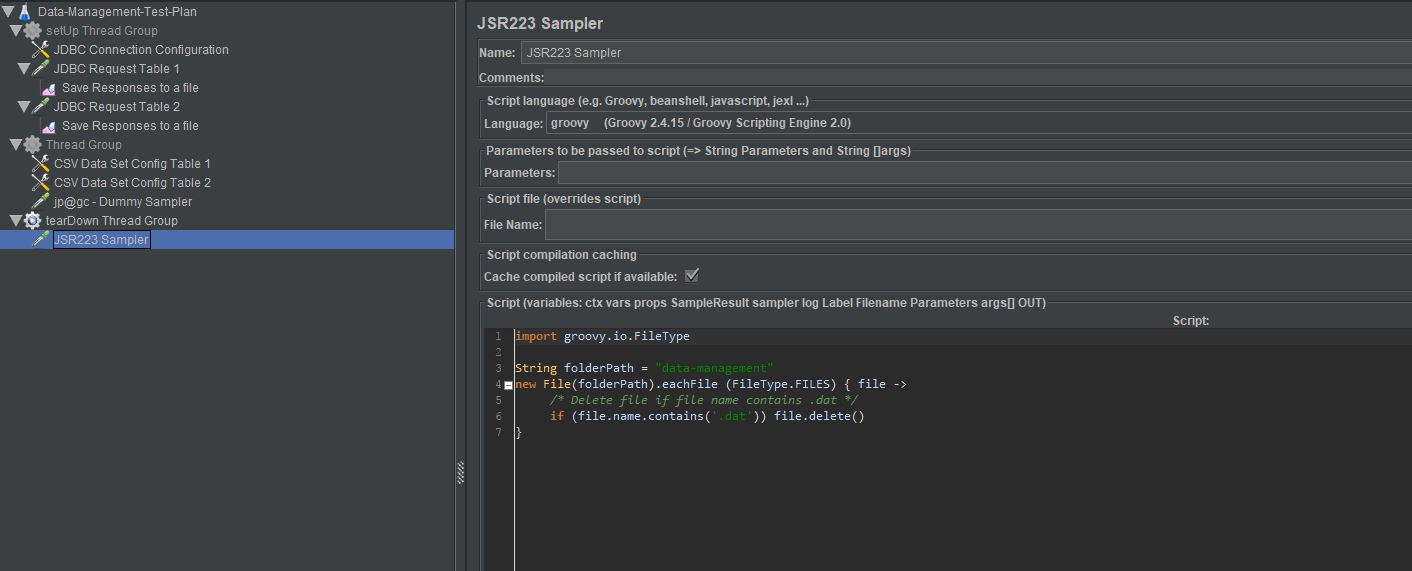

For our test we have created the only housekeeping comes in the form of deleting the 2 flat files we created in the setUp Thread Group.

The easiest way to do this is to use a JSR223 Sampler and some Groovy.

Clearly the tearDown Thread Group could do much more including tidying any data that might have been written to the database during the test in order to keep your test environments clear of data created during your test execution.

So what we are going to do is demonstrate how this can be accomplished as its a powerful way of ensuring that your tests leave the environment in the same position it was in before execution meaning you are always tests against the same dataset volumes.

Now clearly to delete data that has referential integrity across multiple tables is not always possible but where no, or simple, relationships exists and it is possible then it is an option so worth discussing.

Firstly we need to create a new table in our database which we will use to write data to from our Test Plan

Now lets create a third table using the following commands, this time a audit table for each execution of our test.

CREATE TABLE OctoPerf_Table_3 (Id Int, Audit_Data Varchar);

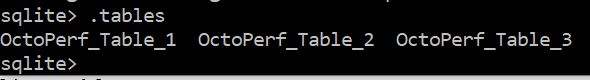

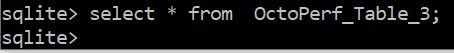

If we run the .tables command we see three tables.

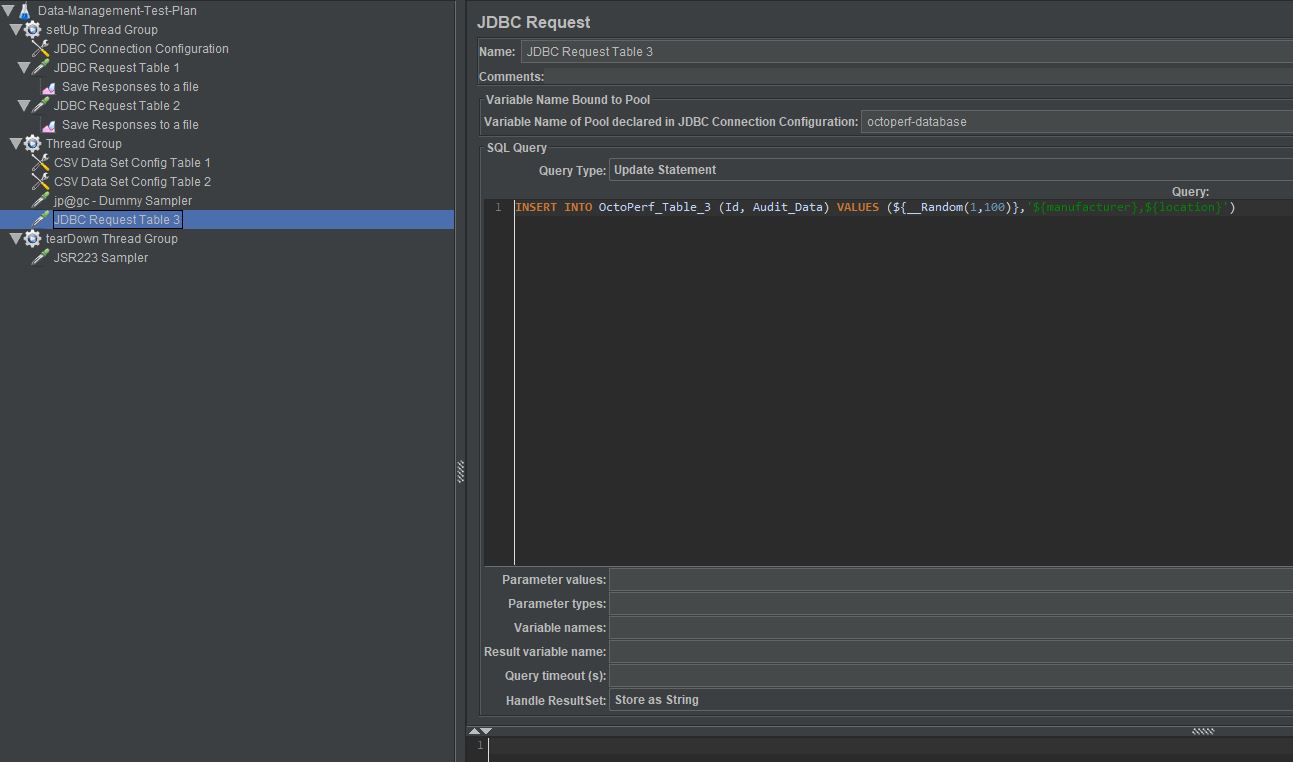

Now lets insert data into this table during the execution of our test by adding another JDBC Request Sampler with an Insert statement

We will not discuss the inserting of data into a database as part of this Blog Post as this is not its purpose, the example above is pretty self explanatory.

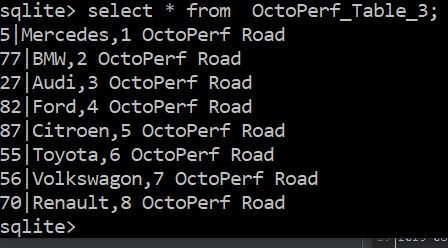

If we run our Test Plan again and run this statement against our database.

SELECT * from OctoPerf_Table_3;

We see that our test has populated OctoPerf_Table_3 with some data from our test.

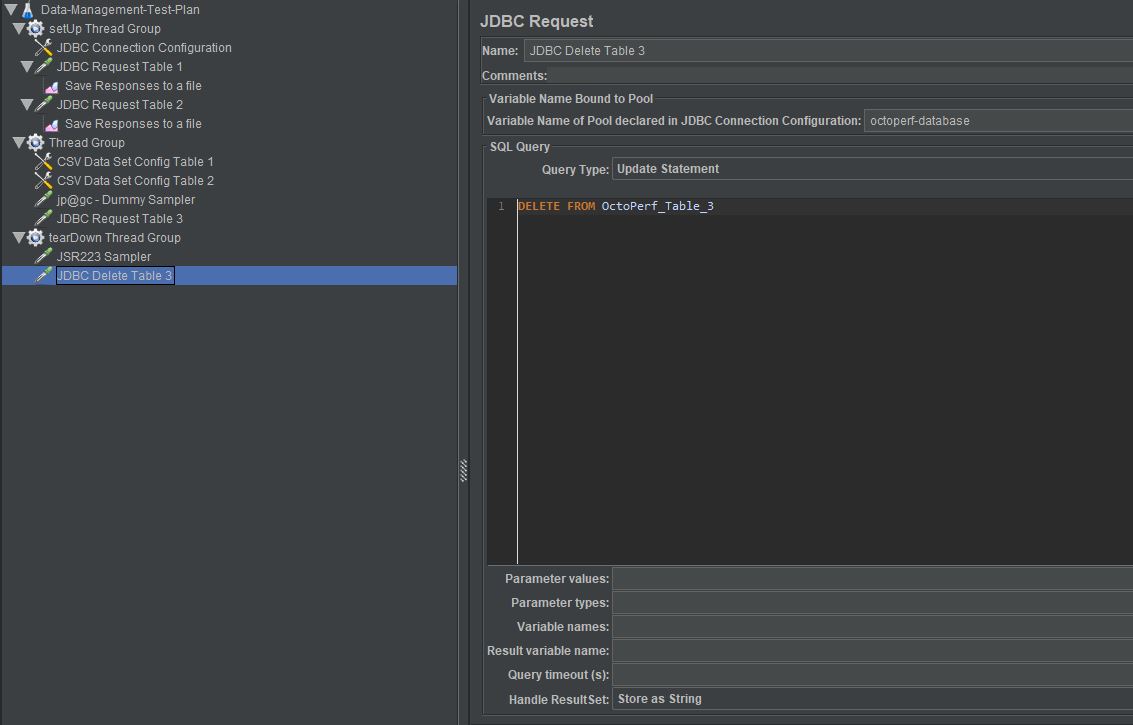

So now we have demonstrated that we can populate this table we can now add the truncating of data from it to our tearDown Thread Group to show how we can tidy data at the end of a test.

We add another JDBC Request Sampler with a DELETE statement, you must remember to use octoperf-database as your pool name and change Query Type to be an Update Statement

Now upon re-running our Test Plan we can see that OctoPerf_Table_3 is empty on completion of the test.

Conclusion¶

Now obviously this is a simple example but the same principles apply to much more complex designs.

Really we are just trying to demonstrate that by being in control of the data you use in your performance testing will make your tests easier to maintain and give you much more complex and diverse data to work with.

It also makes your tests portable across any environment and does not rely on data to be provided by business resources giving you a much more agile approach to performance testing.

The JMeter test plan, SQLite database and the flat files used in this Blog Post are available below, enjoy.