Scheduling Docker containers on a cluster

Docker makes it incredibly easy to run a variety of processes on a cluster of machines. Rancher offers container orchestration on top of Docker. Rancher allows you to manage a cluster of Docker-enabled machines. We use Rancher extensively at Octoperf to run JMeter containers on machines all over the world. We quickly faced an issue with Rancher here: container scheduling.

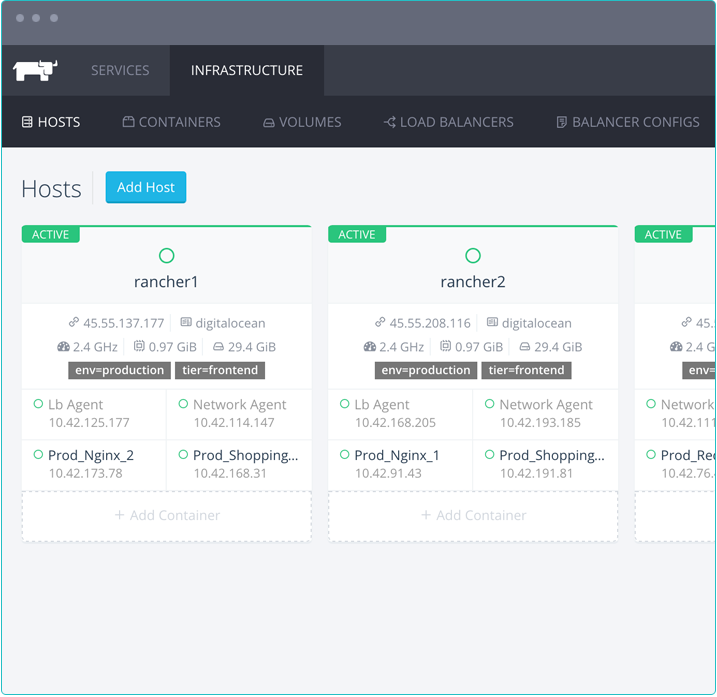

rancher-infrastructure

When you have a single machine running your docker container, everything is simple. You can run containers until the machines resources (CPU, RAM) are depleted. When you start working with 2+ nodes, you start to ask yourself new questions:

- What is the optimal way to run containers on a cluster of machines?

- How do I maximize resources utilization?

Rancher default scheduling¶

Rancher has built-in Scheduling constraints which allow you to place docker containers on the host you want.

By default, Rancher places the docker container on host running the least number of containers. The problem is that Rancher does not take into account the container cpu and memory requirements. If you let Rancher decide where to place your containers.

This kind of scheduling is known as Spread scheduling. It works fine in a perfect world where all your containers have the same resource requirements and your nodes are all the same. They have certainly made this choice because they expect people to place containers manually on hosts, or because it was simple to implement.

As a Saas service, we cannot afford dedicating a 8h rolling team to make the perfect container scheduling by hand. Saas services must run 24h a day, without any manual intervention.

We need to find a practical algorithm to place our customer docker containers on our cluster of nodes automatically in a reasonable time frame.

Identifying the problem¶

You have started touching the problem's surface. At Octoperf, our customers run load tests with JMeter. For heavy load tests, you quickly need to spread the load on several nodes by splitting the big load test task into smaller ones. We want to use the least possible machines to run all the tasks.

Each time you encounter a hard problem, you should ask yourself if it's an already know class of problem. It appears that placing docker containers on a cluster of nodes is a well-known problem: the binpack problem. At Octoperf, the challenge is to maximize node utilization to minimize cost. We want to run the least possible nodes executing our customer JMeter containers to maximize profit.

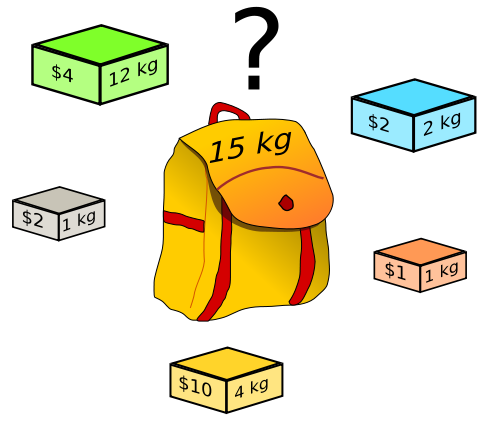

Binpack problem¶

Suppose your Docker containers are goods of different sizes, and your docker nodes are volumes of different sizes where you want to fit those goods. Sadly, finding the optimal solution to this problem takes exponential time: you must explore all the combinations of placement to see which one suits best.

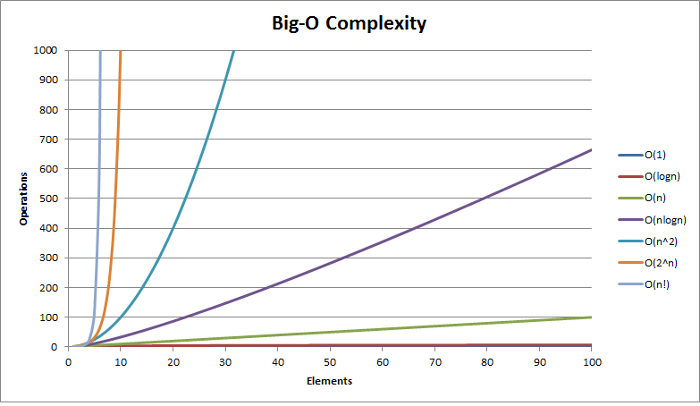

Algorithm complexity comparison¶

The binpack problem is an NP-Complete problem. It means there is no algorithm which finds the optimal solution to this problem in polynomial time complexity. Finding the optimal solution to this combinatorial problem can quickly become impractical.

With just 10 containers to place on a 10 machines cluster, the algorithm is already going to take minutes to find the best answer. While our customers like to spend time using our application, they will surely appreciate if we can place their tasks on our cluster of machines in a reasonable time.

Approximate solution¶

Now we know that our problem is a well-known problem whose exact solution is almost impossible to get before we are all dead. The good news is that there are Heuristhics to solve this problem.

An Heuristic trades solution quality in favor of execution time. It will give you a sub-optimal solution in a reasonable timeframe. After all, we can accomodate to launch a few more machines on Amazon

First Fit Decreasing Binpack¶

The FFD algorithm is pretty well to solve the Binpack problem.

First, we order the containers by decreasing memory requirements. In our case, the CPU doesn't matter. As we have heterogeneous nodes (unlike in the presentation above), we sort them by decreasing available resources. Then, we try to place each container one-by-one on the nodes.

What happens if the tasks require more nodes than available? We simply simulate as if we have added another node to the cluster, and run the solver again. As soon as all the containers could be placed, we know how many nodes we need to start to meet the demand.

This algorithm is really easy to implement and gives pretty good results. Studies have shown that this algorithm doesn't use more than 11/9 OPT + 1 nodes, where OPT is the optimal solution.

Suppose we need to launch a load test which requires 10 machines in the optimal case, we will end up launching about 13 nodes in worst case. That's roughly 30% more resources than optimal consumed. But, it's acceptable since machines are only launched for the duration of the load test. EC2 instances are also pretty cheap when you need them only for a few hours.

On most cases, FFD shows to use no more than 11/9 OPT nodes. That's about 22% more nodes than needed.

Better algorithms¶

There are better algorithms to solve the binpack problem, like the harmonic algorithm. Sure, it will probably give solutions which are nearer to the optimal solution, but at the expense of maintainability.

Optimisation always comes at the cost of maintainability in software development. We have deliberately made the choice to select the easiest and good enough algorithm to solve our problem.

Java Implementation¶

Here is how our First Fit Decreasing looks like once implemented in Java 8:

As you can see, it takes less that 100 lines of code and is extremely easy to read. This service takes as input:

- computers: list of computers, equivalent to cloud nodes,

- processes: list of docker containers to place.

It returns the computers with processes it could assign to them. Processes that could not fit into the list of computers fall into the remainings list.

Back to Rancher¶

Thanks to this simple algorithm, we assign docker container automatically to given hosts using Rancher's host affinity labels.

Conclusion¶

It always pays off to have some basic knowledge about existing algorithmic problems and their possible solutions. It's just a matter of transposing your problem to a similar existing problem and using solutions that apply to them.

Feel free to tell here which problems you have encountered and how you solved them!